Ten31 Research: Our Database, Your Problem

Stablecoins, Blockchains, and the Future of the Digital Dollar

Over the past two years, and especially since the beginning of Trump 2.0, it has become de rigueur to assume and confidently proclaim that stablecoins are set to become the “killer app of crypto.” Bellwethers of traditional finance from Larry Fink to Jamie Dimon have pivoted from begrudging acceptance to outright promotion of stablecoins, while supportive statements by the Treasury Department and Fed Governors have poured fuel on the fire, hinting at stablecoins as the marginal release valve for the accelerating flood of US debt issuance. Even last year’s flagship Bitcoin Conference tilted heavily toward stablecoin content, and enthusiastic acolytes like Tom Lee have filled up long blocks of CNBC airtime promoting their visions for a future of dollars running on blockchain rails and banking stacks reworked around projects like Ethereum and Solana.1

There may be elements of truth beneath some of these headlines, and stablecoins have no doubt shown robust traction for several use cases – in particular, offering a new form of dollar access to economies with weak local banking and currency options – but there is strong reason to believe blockchain-based stablecoins as currently conceived and implemented are far from the panacea their advocates claim. The near- to medium-term future will probably include an expanded role for various (and variously dystopian) incarnations of a digital dollar, but the inherent trade-offs of blockchains mean that their applicability to dollar-linked payments and banking infrastructure will likely be limited, and long-term value capture will shift away from the blockchain projects that many analysts and investors too complacently view as the “picks and shovels” of the emerging digital dollar ecosystem.

What We Talk About When We Talk About Stablecoins: Some Taxonomy

Before diving in, we should clarify a few key terms that define our focus for this discussion. Most importantly for our purposes, a stablecoin here refers to a digital asset issued by a counterparty intended to be convertible at par with one US dollar, and where that “peg” is maintained largely or entirely by an issuer’s highly liquid, dollar-denominated cash collateral (typically some combination of bank deposits, short-dated US Treasury bills, and / or money market funds). This definition covers the most widely used stablecoins in circulation today and aligns with emerging regulatory frameworks like the GENIUS Act in the US. Some issuers have also launched so-called “algorithmic” stablecoins that attempt to provide a synthetic dollar peg using assets outside the dollar system, but these are famously fraught with operational risks and design difficulties, and in any case account for a small share of global stablecoin volume, so we will look exclusively at conventional, fiat-backed stablecoins like Tether’s USDT and Circle’s USDC.

Throughout the discussion, we will also make frequent reference to “public blockchains,” which – in contrast to permissioned networks run by whitelisted consortia that look more like private shared databases – are transparent and openly accessible networks that attempt to offer permissionless, globally available, peer-to-peer transactions on distributed ledgers without reliance on central operators. The most prominent examples of such public blockchains are Ethereum, Solana, and Tron, and these systems have historically accounted for the vast majority of global stablecoin activity. Most of our commentary will therefore reference these ecosystems as touchstone examples, but the framework is broadly applicable across blockchains. Furthermore, we will be looking at the public blockchain use case for stablecoins specifically; attentive readers may intuit that we are skeptical of most other blockchain claims (e.g. the tokenization of “real-world assets”), but all of our evaluation here will focus only on stablecoin applications. To put a finer point on it, our purpose is not to evaluate whether some form of digital dollar will gain traction, but specifically whether public blockchains will actually be necessary for and durably benefit from any such traction in the long term.

Finally, our commentary here will be limited to popular claims about what stablecoins transmitted on public blockchains can functionally accomplish. There are a variety of more abstract (though no less critical) questions that won’t be addressed here – e.g. the long-term security implications of the Proof of Stake consensus mechanism used in many of these blockchains; the viability of any blockchain whose endogenous settlement unit does not successfully compete as money; and many others – but we encourage interested readers to consult the linked resources for further discussion.

Is “The Blockchain” In the Room with Us Right Now?

It’s important to start by unraveling one common piece of shorthand that plagues a good deal of mainstream stablecoin discourse, especially among generalist investors and observers. Many commentators on this topic tend to draw comparisons between “the blockchain” and “the internet,” creating the impression that the blockchain is a comparable type of abstract, general-purpose software overlay that can connect a diverse, global, arbitrarily large set of endpoints to facilitate transactions. However, this ignores a key fundamental difference: the internet is one, open, interoperable protocol (or, more accurately, one protocol stack) around which billions of users have converged for standardized information transfer, resulting in massive unifying network effects. In stark contrast, there is no “the blockchain,” only a fragmented collection of individual blockchains – Ethereum, Solana, Tron, and thousands more – each with its own set of protocol rules, design choices, and very limited native interoperability. The next time you hear that “the future of finance is on the blockchain,” your first question should be: “Which one?”

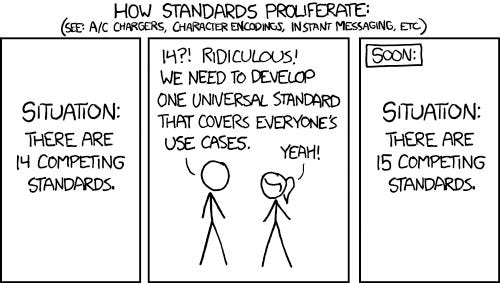

Far from just a semantic dispute, this confusion actually reveals a significant misplaced assumption that all the relevant computational and security work needed to facilitate stablecoin transactions can just be outsourced to some vaguely defined technological deus ex machina. In reality, stablecoins do not run on “the blockchain,” but rather on “a blockchain”: any given stablecoin transaction takes place on a single, specific chain, and those individual chains are effectively each their own incompatible walled gardens. For example, a balance of Tether’s USDT – the most widely used stablecoin globally – held on the Tron blockchain is not natively fungible with a USDT balance on Ethereum or Solana. Interactions between USDT holders on different chains require the use of “bridges” and / or third-party intermediation of some kind, exposing users to additional fragility and risk: these systems have accounted for at least $3 billion of lost user funds over the past several years, underscoring just how far these projects are from the internet’s robust, standardized interoperability. Furthermore, the number of such proposed bridging solutions has exploded in the last several years, exposing participants to more operational overhead and highlighting the classic dilemma of protocol fragmentation.

Stablecoin issuers are therefore not simply leveraging “the blockchain” as a neutral, universal rail. They are instead faced with a set of individual networks that are not only siloed, but also come laden with technical and social risks that any stablecoins riding on them inherit. The most notable historical example of these risks is probably Ethereum’s DAO hack – which compromised 3.6 million ETH (worth $60 million at the time) – and the subsequent blockchain hard fork orchestrated by a small set of developers and early investors, effectively reversing protocol-valid transactions (and the “code is law” ethos of the chain) by ex post centralized fiat. The record of other blockchains, meanwhile, is littered with long episodes of severely degraded performance and outages, including dozens of such incidents on Solana and Tron, and countless more across the long tail of less prominent blockchains. Going forward, the performance of any of these chains will always rest on the quality of the roadmap – which in many cases contemplates material increases in complexity over time – and each blockchain dev team’s ability to brute-force updates and patches as necessary, a dynamic that by itself seriously calls into question the ostensible “neutrality” of these rails (addressed further below). On net, this all speaks to a much more fragmented, governance-dependent, and operationally fragile foundation than the clean “money internet” framing would suggest, undermining the mainstream presentation of a unified, reliable substrate for next-generation financial infrastructure.

However, even if we were to assume the advent of frictionless bridges and flawless technical performance going forward, stablecoins would still face a much more fundamental challenge – “blockchain technology” is not the right tool for this job.

Blockchains Don’t Scale

Following decades of precedent computer science innovations, Satoshi Nakamoto invented the original “blockchain” as a purpose-built mechanism to facilitate the operation of the bitcoin network. Satoshi’s design made several specific engineering and incentive choices to solve the “double-spend problem” which had plagued prior attempts at digital currency – before bitcoin, no such currency had been able to ensure a user couldn’t spend a given digital token more than once without some kind of central, trusted clearing service overseeing all transactions. Through the use of an openly auditable ledger with a complete network transaction history constantly verified by all users, where each new “block” of transactions in the “chain” is cryptographically linked to the prior block through unforgeably costly computation tied to real-world energy use (“Proof of Work”), bitcoin brought about the world’s first digital currency with provable resistance to double-spending. Like gold or physical cash, bitcoin is a bearer asset, meaning physical possession is fully tantamount to ownership; but unlike physical cash, bitcoin has no issuer and is thus not the liability of any counterparty. These properties allow bitcoin to be stored and exchanged on a peer-to-peer basis, all without a trusted intermediary overseeing the ledger and authorizing transactions. This has proven to be an extremely robust design, but it also comes with a set of very significant trade-offs that all other blockchains necessarily inherit.
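For readers who want a concrete picture of the hash-linking and Proof of Work described above, the following is a deliberately tiny sketch rather than anything resembling bitcoin's actual implementation. The block layout, the `difficulty` parameter, and every function name are our own illustrative assumptions; real bitcoin blocks use a different header format, double SHA-256, and a vastly higher difficulty target.

```python
import hashlib

GENESIS_PREV = "0" * 64  # placeholder "previous hash" for the first block (assumption)

def block_hash(prev_hash, payload, nonce):
    """Hash of a toy block: previous hash, payload, and nonce joined together."""
    data = f"{prev_hash}|{payload}|{nonce}".encode()
    return hashlib.sha256(data).hexdigest()

def mine(prev_hash, payload, difficulty=3):
    """Brute-force a nonce whose hash starts with `difficulty` zero hex digits."""
    prefix = "0" * difficulty
    nonce = 0
    while True:
        h = block_hash(prev_hash, payload, nonce)
        if h.startswith(prefix):
            return nonce, h
        nonce += 1

def build_chain(payloads, difficulty=3):
    """Mine one block per payload, each committing to the hash of the prior block."""
    chain, prev = [], GENESIS_PREV
    for payload in payloads:
        nonce, h = mine(prev, payload, difficulty)
        chain.append({"prev": prev, "payload": payload, "nonce": nonce, "hash": h})
        prev = h
    return chain

def verify_chain(chain, difficulty=3):
    """Anyone can cheaply re-check the links and the work, without trusting the miner."""
    prefix, prev = "0" * difficulty, GENESIS_PREV
    for block in chain:
        if block["prev"] != prev:
            return False
        h = block_hash(block["prev"], block["payload"], block["nonce"])
        if h != block["hash"] or not h.startswith(prefix):
            return False
        prev = h
    return True
```

The asymmetry is the point of the sketch: producing a block requires a costly search, while checking the whole history requires only one hash per block. Altering any historical payload invalidates that block's hash and, through the `prev` links, every block built on top of it.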

Perhaps the most fundamental such trade-off is decentralization versus scalability. To ensure the resilience of the properties that motivated bitcoin’s creation and have allowed it to accrue value – permissionless access and verification by a global network, censorship resistance, and the enforcement of its fixed supply – anyone must be able to transact on the network and audit its data without excessive barriers to entry. This means that running a local instance of bitcoin must have sufficiently lightweight memory and bandwidth requirements that any participant with consumer-grade hardware can download bitcoin’s blockchain and reliably verify the validity of newly proposed blocks. Otherwise, the network’s data and bandwidth burdens would ultimately balloon to functionally exclude the average user, inexorably trending toward centralization of block verification and thus a breakdown of the incentive balance that allows the protocol to facilitate its purpose. If the data and performance requirements are such that you need permission to use someone else’s computer (or, in practice, their highly specialized data center) to broadcast your transactions and verify your balances, you ultimately have no check on the enforcement of protocol rules. Sovereign is he who validates the blocks.

The trade-off here is that this directly leads to necessary constraints on the blocks in the chain; they can’t have too much data per block, and they must come in slowly enough to be reliably validated by low-grade hardware. The actual “right” limits on these metrics have been the subject of much debate, but the intuition is that the throughput of any blockchain that hopes to preserve permissionless participation without reliance on trusted intermediaries will cap out at some point orders of magnitude below the threshold of global transaction volume. Ergo, the properties that make a blockchain useful in the first place necessarily put significant limits on its scalability. There is nothing special about “blockchains” in the abstract that enables faster, more efficient, or more equitable exchange – in fact, the opposite is true. As Saifedean Ammous puts it:

“Bitcoin’s mechanism for establishing the authenticity and validity of the ledger is extremely complex and complicated, but it serves an explicit purpose: issuing a currency and moving value online without the need for a trusted third party. “Blockchain technology,” to the extent that such a thing exists, is not an efficient or cheap or fast way of transacting online. It is actually immensely inefficient and slow compared to centralized solutions. The only advantage that it offers is eliminating the need to trust in third-party intermediation. The only possible uses of this technology are in avenues where removing third-party intermediation is of such paramount value to end users that it justifies the increased cost and lost efficiency.” [Emphasis added]2

Say It With Me: Blockchains Don’t Scale

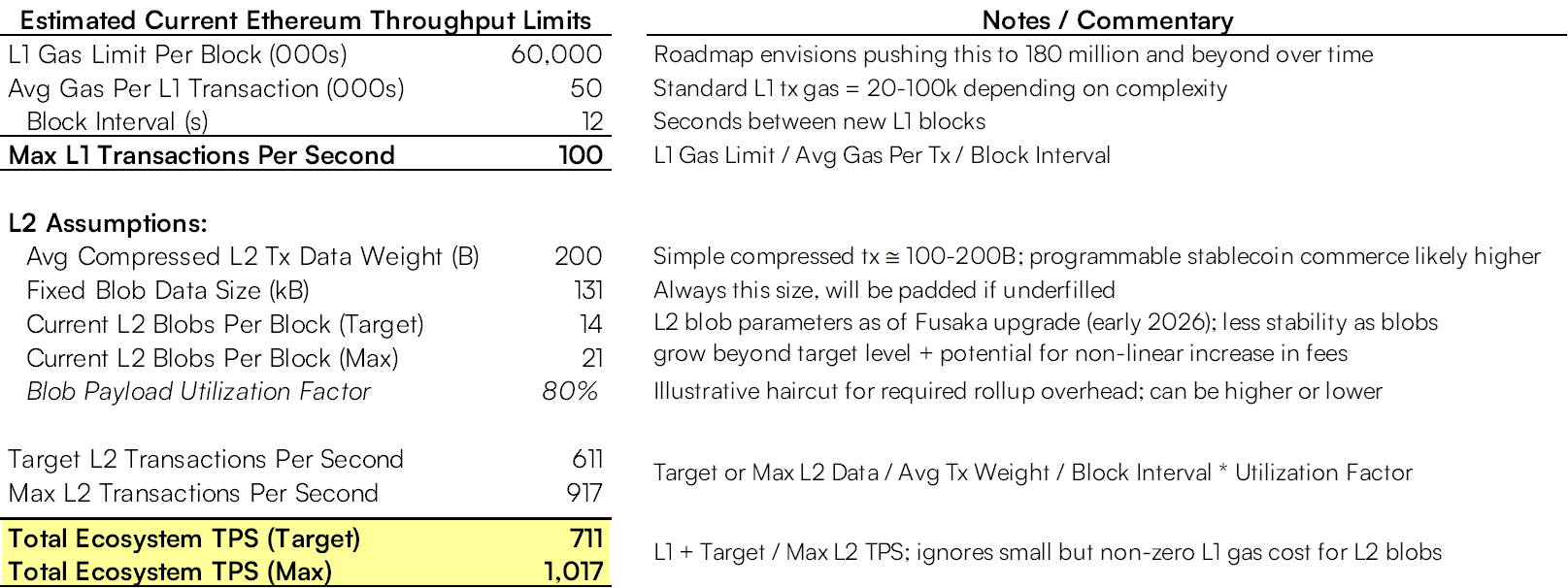

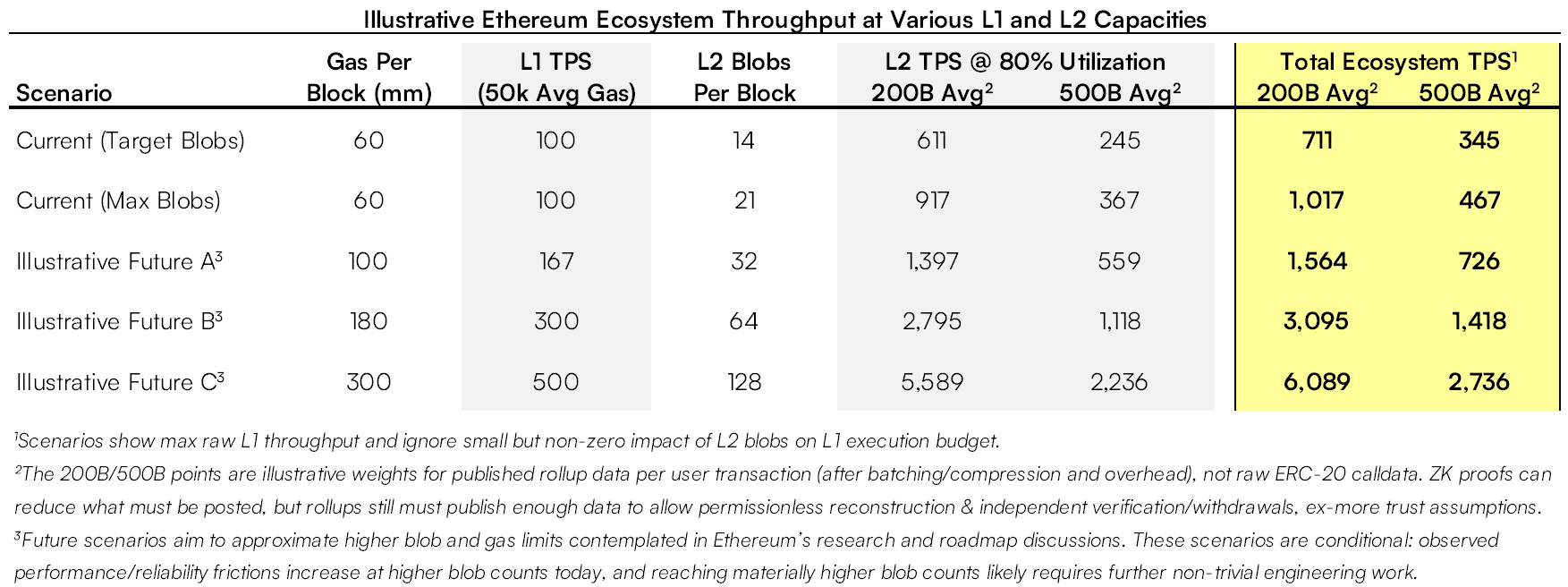

These inherent scaling limitations have been very apparent on all the blockchains that have attempted to serve as stablecoin substrates – even those that were ostensibly designed to be faster and more scalable than bitcoin – because with any such design, there is only so much blockspace (loosely, data capacity) available per unit of time. As a rough framing heuristic for these limits, Ethereum’s blockchain currently facilitates 20-30 transactions per second (“TPS”) on average and supports maximum throughput of ~100 TPS (though fees begin to adjust dynamically higher well in advance of that limit).3 The blockchain’s developers have plans to continue increasing this well into the hundreds, but even this is dramatically below the Visa network’s average TPS of 1,700 (with maximum capacity of 65,000+), global card networks collectively processing 25,000+ TPS, and total worldwide non-cash TPS of 50,000+.4 As a result, Ethereum’s blockchain has historically averaged $1-5 per transaction with spikes up to $50+ even for simple transfers during periods of high congestion. These figures are at best in line with and often significantly above the average per-transaction cost borne by legacy dollar payment rails, despite Ethereum supporting only a negligible fraction of the legacy system volume to which stablecoin enthusiasts aspire.5
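To put the size of this gap in proportion, the short calculation below simply divides the throughput figures quoted above. The only number not taken directly from the text is the 25 TPS midpoint of the 20-30 TPS range, which is our simplification, and the function names are illustrative.

```python
# Throughput figures as quoted in the text (transactions per second).
ETH_L1_AVG_TPS = 25           # midpoint of the 20-30 range (our simplification)
ETH_L1_MAX_TPS = 100
VISA_AVG_TPS = 1_700
VISA_MAX_TPS = 65_000
GLOBAL_NON_CASH_TPS = 50_000

def gap_multiple(capacity_tps, demand_tps):
    """How many times larger the demand is than the available capacity."""
    return demand_tps / capacity_tps

def capacity_share(capacity_tps, demand_tps):
    """Fraction of the demand that the capacity could absorb."""
    return capacity_tps / demand_tps

print("Visa average vs Ethereum L1 maximum:",
      gap_multiple(ETH_L1_MAX_TPS, VISA_AVG_TPS))
print("Global non-cash vs Ethereum L1 maximum:",
      gap_multiple(ETH_L1_MAX_TPS, GLOBAL_NON_CASH_TPS))
print("Share of global non-cash volume at L1 maximum:",
      capacity_share(ETH_L1_MAX_TPS, GLOBAL_NON_CASH_TPS))
```

On these inputs, Visa's average load alone is 17 times Ethereum's quoted L1 ceiling, and worldwide non-cash volume is 500 times that ceiling.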

Serious Ethereum advocates would no doubt object that most ecosystem participants already expect incremental scaling not through the main Ethereum blockchain (the Layer 1, or “L1”), but rather via so-called Layer 2 (“L2”) mechanisms, which are separate systems (e.g. “rollups”) that process transactions off-chain while anchoring to the L1.6 Essentially, these designs aggregate and execute many transactions off-chain, then periodically post a compact summary to the L1 so that anyone can reconstruct and audit the validity of the data. This “batching” can allow the cost of a single L1 transaction to be amortized across the many constituent transfers within the batch, which can enable realized per-transaction costs as low as ~$0.01 in periods of slack demand. Fundamentally, however, these systems face their own inescapable architectural limits on scalability as usage grows, most notably their “data availability” requirements: because L2s must periodically hit the L1 with their transaction batches and there is only so much L1 blockspace available, higher demand for L2 usage will eventually (and often quickly) drive correspondingly high L1 fees, which L2 providers ultimately pass on to users. This means L2s can temporarily reduce, but ultimately not eliminate, the on-chain cost and congestion that Ethereum would inevitably experience if stablecoin users attempted to transition any meaningful fraction of global transaction volume to the ecosystem.
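The amortization logic above can be sketched in a few lines. This is a simplified cost model under our own assumptions, not any rollup's actual fee formula: the dollar figures in the usage example are hypothetical, and `l2_overhead_usd` is a stand-in for whatever operating margin an L2 provider charges.

```python
def per_transfer_cost(l1_batch_fee_usd, transfers_in_batch, l2_overhead_usd=0.0):
    """Cost borne by one transfer when a single L1 posting fee is shared by a batch."""
    if transfers_in_batch <= 0:
        raise ValueError("a batch must contain at least one transfer")
    return l1_batch_fee_usd / transfers_in_batch + l2_overhead_usd

# Hypothetical scenario: the same 1,000-transfer batch under slack and congested L1 conditions.
slack = per_transfer_cost(l1_batch_fee_usd=5.0, transfers_in_batch=1_000)
congested = per_transfer_cost(l1_batch_fee_usd=500.0, transfers_in_batch=1_000)
print(f"slack L1: ${slack:.3f} per transfer; congested L1: ${congested:.3f} per transfer")
```

Batching divides the L1 fee but does not remove it from the numerator: if competition for L1 blockspace multiplies the posting fee by 100, the per-transfer cost rises by the same factor unless batches can grow correspondingly, and batch size is itself bounded by the data each L1 block can carry.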

In practice, L2 systems – which now facilitate the vast majority of total Ethereum ecosystem transactions – have helped boost average realized system-wide TPS to ~350, though the current ceiling for sustained economically meaningful throughput before fees start to rise uncomfortably is likely something less than 1,000.7 This is still orders of magnitude short of accommodating global or even just developed market volumes, particularly since organic, real-world payment flows are often much more complex and data-intensive than many flavors of crypto-native volume (interested readers can peruse Appendix B for some additional detail on these estimates). To be sure, L2s have enabled short bursts of much higher throughput, though in many cases these bursts have unsurprisingly also driven much higher L2 fees, often even exceeding L1 fees.8 Like seats on an airplane or rooms in a hotel, the price of entry can be very low and barely respond to new entrants when demand is not close to the binding capacity constraint, but the price increases nonlinearly as capacity approaches saturation. Any cost advantage versus traditional payment networks today is therefore essentially the artifact of relatively low average demand; in the long run, any sustained, material increases in transaction volume would just lead L2 end users to bid up costs to the marginal user’s next-best alternative (i.e. legacy payment rails).
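One concrete mechanism behind this kind of pricing on Ethereum's L1 is the base-fee adjustment rule introduced by EIP-1559, under which the base fee moves by up to 12.5% per block depending on how full the previous block was relative to a target of half the maximum. The sketch below is a simplified floating-point version of that rule, not the protocol's integer arithmetic; the `gas_target` value and the helper names are illustrative assumptions, and it ignores priority fees and L2-specific fee markets entirely.

```python
def next_base_fee(base_fee, gas_used, gas_target, max_change=1 / 8):
    """EIP-1559-style update: fee rises when blocks run above target, falls below it."""
    delta = (gas_used - gas_target) / gas_target
    return base_fee * (1 + max_change * delta)

def simulate(base_fee, utilization, blocks, gas_target=15_000_000):
    """Apply the update for `blocks` consecutive blocks at a fixed utilization.

    `utilization` is the fraction of the maximum block size that is used,
    where the maximum is assumed to be twice the target.
    """
    gas_used = int(utilization * 2 * gas_target)
    for _ in range(blocks):
        base_fee = next_base_fee(base_fee, gas_used, gas_target)
    return base_fee

for utilization in (0.25, 0.5, 0.75, 1.0):
    print(utilization, round(simulate(1.0, utilization, blocks=20), 3))
```

At half-full blocks the fee does not move at all, which is the "slack demand" regime in which low costs are observed today. With persistently full blocks the fee compounds at 12.5% per block, so after 20 blocks it is roughly ten times its starting level.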

There are other significant issues to consider with this scaling model, including:

Variable Latency / UX: Due to a volatile fee market, users can easily end up stranded deep in a transaction confirmation queue, a noteworthy UX hurdle for many real-world payments flows that further undercuts the supposed efficiency gains of blockchain transactions relative to legacy rails.

Interoperability Problems: Just as the proliferation of blockchains fragments liquidity and creates complexity at the L1 level, the growth of diverse types of L2s – which already number in the dozens today – presents additional challenges for interoperability within the Ethereum ecosystem, as users must rely on bridging services to transact among an array of L2s with varying fee mechanisms and design assumptions.

Mass Exit: A key element of the L2 scaling model required for durably “permissionless” use of these systems is the assumption that users can always unilaterally exit to the L1 at their discretion, creating a theoretical check on the behavior of L2 operators. However, users’ ability to economically enforce such exit rights when they might most need them is quite tenuous, as any L2 operator failure or perceived compromise runs the risk of creating sudden demand for a mass exit to the L1, potentially spiking fees to the point of pricing out users with smaller balances.

MEV: An especially thorny problem that has plagued Ethereum’s base chain for many years, Maximal Extractable Value (“MEV”) is a systemic issue that drives higher end-user costs and transaction delays in ways that can be difficult to predict and control, famously giving rise to the idea of Ethereum as a “Dark Forest.” This hurdle, which prominent Ethereum R&D organization Flashbots has called “the dominant limit to scaling blockchains” and which offers enough material for its own essay, is exacerbated by the increased complexity of multifaceted L2 interactions.

The upshot here is that, regardless of the ever more complex tricks employed, blockchain-based systems face persistent, structural scaling constraints if they seek to preserve meaningful decentralization and permissionlessness (ostensibly their key differentiator). Of course, that naturally suggests that one way to mitigate some of these issues is – you guessed it – more centralization.

Right Back Where We Started

You might, for example, rely on yet another layer to clear many more transactions via explicitly trusted custodial platforms and / or payment service providers (“PSPs”) that run their own internal ledgers and highly specialized operations to intermediate user interactions while anchoring periodically to the L1 and L2s. In other words, you could solve these problems by recreating banks (just with more steps, less network effect, and no lender of last resort). Perhaps not surprisingly, this is broadly how the Ethereum ecosystem has trended, with a significant share of user activity depending in some way on custodial platforms and / or trusted intermediaries like exchanges, RPC providers, and PSPs that sit on top of L2s – which are highly centralized themselves due to capital intensity and operational overhead, with the most popular iterations running on unilaterally controlled servers.9 This improves scale and abstracts away complexity, but in exchange for diminished user recourse to the “permissionless” blockchain.10 Even in the case of something like MetaMask, the most popular Ethereum wallet which allows users to hold private keys locally, most users in practice depend on third-party infrastructure like Infura (which is notably owned by the same parent company as MetaMask) to actually submit transactions and query balances.11

From a user perspective, this shift can offer experiences that look like the familiar UX of legacy payments, but only by reintroducing the same unsecured credit exposures and institutional dependencies that enable legacy payment rails. Custodial wallets and PSPs (typically large exchanges like Coinbase and Binance or fintech custodians like PayPal) maintain their own internal ledgers of user balances which they debit and credit instantly based on transaction flows, while only reflecting the net results periodically on L1s and L2s. These arrangements open up capacity for orders of magnitude more batching while giving users fast payments, but at the cost of significant abstraction from the settlement assurances of the L1 blockchain. Effectively, users are at least depending on the permission and technical efficacy of these third parties to facilitate their transactions, and are often just transacting directly with unsecured claims on the intermediaries, the ultimate value and utility of which will depend on the solvency, ideological neutrality, risk controls, technical resilience, and goodwill of any given custodian or PSP. Beyond outright custodial relationships, the emergence of corporate middleware like Circle’s CCTP – particularly its Fast Transfer feature, which offers fee-based accelerated cross-chain transfers through Circle-controlled infrastructure – illustrates the same drift toward managed service layers that improve speed and convenience in exchange for greater trusted intermediation.
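The internal-ledger pattern described above can be sketched as follows. This is a toy model under our own assumptions rather than a description of how any named exchange or PSP operates: the `Custodian` class, the `pay` and `settle` helpers, and the two-custodian setup are all hypothetical, and balances are tracked in integer cents.

```python
from collections import defaultdict

class Custodian:
    """Holds user balances as entries on its own private ledger (IOUs to users)."""
    def __init__(self, name):
        self.name = name
        self.balances = defaultdict(int)  # user -> cents

def pay(src, src_user, dst, dst_user, cents, net_owed):
    """Instant user-facing payment: only internal ledgers change, nothing goes on-chain."""
    if src.balances[src_user] < cents:
        raise ValueError("insufficient funds")
    src.balances[src_user] -= cents
    dst.balances[dst_user] += cents
    if src is not dst:
        net_owed[(src.name, dst.name)] += cents  # obligation accrues between custodians

def settle(net_owed, a, b):
    """Periodic settlement: one on-chain transfer for the net amount a owes b."""
    net = net_owed[(a, b)] - net_owed[(b, a)]
    net_owed[(a, b)] = 0
    net_owed[(b, a)] = 0
    return net  # positive means a pays b; negative means b pays a

net_owed = defaultdict(int)
exchange_a, exchange_b = Custodian("A"), Custodian("B")
exchange_a.balances["alice"] = 10_000
exchange_b.balances["bob"] = 5_000

pay(exchange_a, "alice", exchange_b, "bob", 3_000, net_owed)
pay(exchange_b, "bob", exchange_a, "alice", 1_000, net_owed)
print("net on-chain transfer from A to B, in cents:", settle(net_owed, "A", "B"))
```

Any number of user payments collapse into a single net transfer between the two custodians, which is where the scaling comes from. It is also where the credit exposure comes from: between settlements, what users hold are the custodians' ledger entries, not on-chain tokens.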

In any case, the Ethereum scaling roadmap increasingly relies on even more of this type of intermediation over time. Similarly, Solana has embraced the same scaling trade-offs even more directly, pursuing a single very high-throughput L1 with a concentrated, professional validator set that even now requires high ongoing capital commitments along with professional-grade hardware and bandwidth.12 These dynamics have pushed the number of active Solana validators steadily lower over time, and the project’s roadmap aims to continue pushing L1 capacity and technical requirements higher, even as more of the user-facing experience is delivered through platform-mediated wallets and managed service layers. Tron goes even further, building on a design of just 27 so-called “Super Representatives” that have sole discretion over processing transactions to optimize for maximum throughput from exchanges, OTC desks, and custodial wallets. Across all three dominant stablecoin ecosystems, the modal user experience is clearly converging on the same pattern: most users rely on trusted intermediaries to deliver the familiar quick payments UX of legacy systems, while those intermediaries in turn settle batched net positions as necessary on the L1s.

These kinds of highly intermediated arrangements will tend to reintroduce the potential for (intentional or unintentional) “rugpulls” of users, as well as renewed surface area for censorship, de-banking, and unequal access comparable to the legacy system. Even if all of that could be avoided, in the long run these intermediaries still won’t be able to sidestep the same kinds of upward fee pressures we’ve described elsewhere if transaction demand truly rises toward global payments scale, as the same basic on-chain constraints will eventually apply whenever L1 blockspace is persistently saturated.

But notice that even if all those conditions could be perfectly optimized by trusted third parties, such a system would likely still recreate a meaningful intermediary cost burden. An imperfect but instructive analog might be the Merchant Discount Rate (MDR) in legacy card payments, which builds in a per-swipe cost bundle that includes (among other things) the economics of fronting funds for instant authorization and managing fraud / disputes, as well as other operational functions like compliance. Assuming this hypothetical intermediated stablecoin stack aimed to preserve many of these features at scale – near-instant authorization UX (even if / when L1 and L2 rails become congested); allowance for disputes; unavoidable regulatory functions – the intermediaries would still need to perform (and be compensated for) many of the same functions intermediaries perform today, just on a different technical architecture. The overall systemic cost would therefore just be transmuted rather than eliminated, again undercutting the promise of “blockchain rails” as an inherently cheaper way to do business in practice.

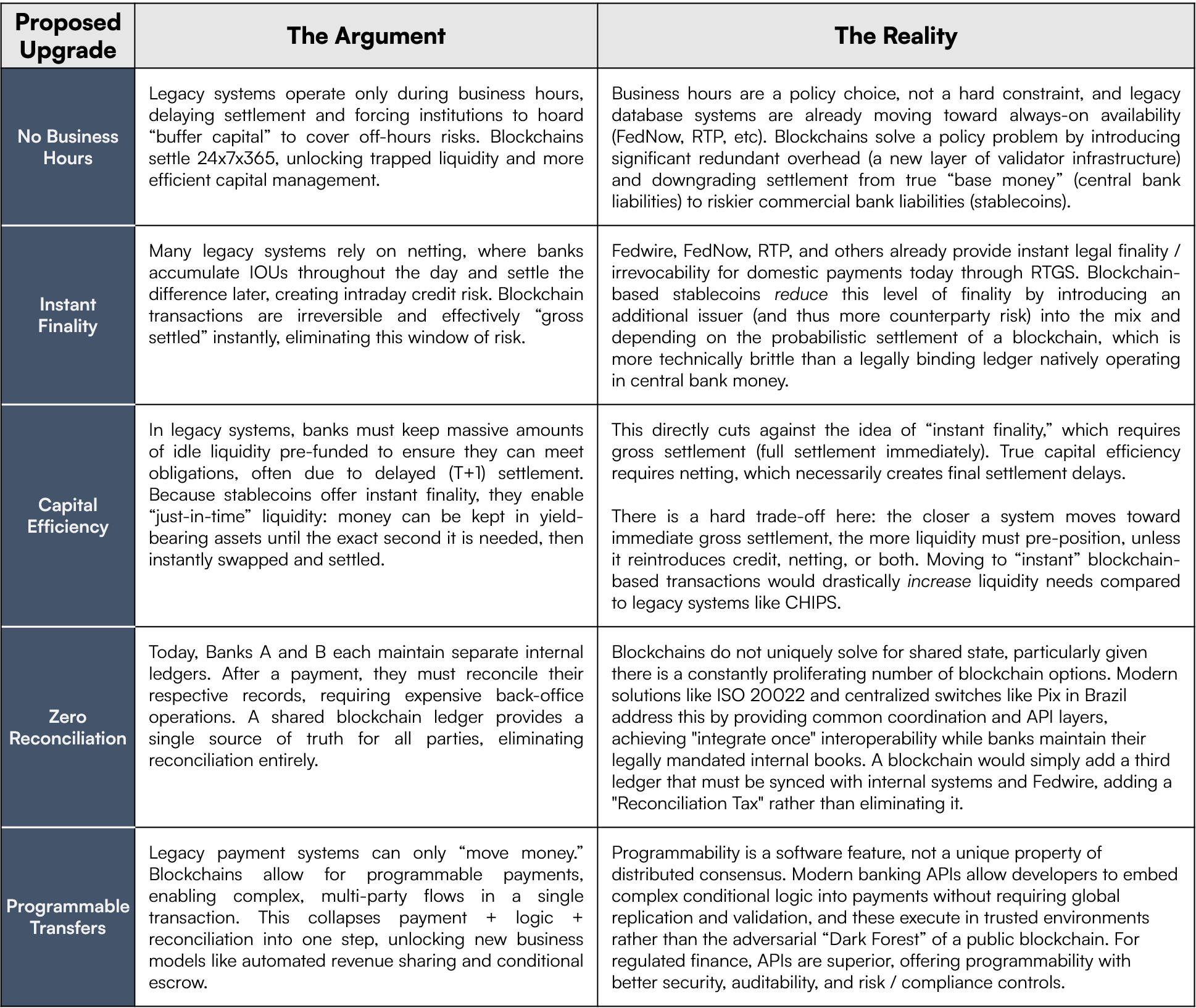

Better Banking Through Chemistry

Even if a stablecoin enthusiast granted that blockchains do carry frictions and trade-offs that will limit permissionless access at scale, and that these trade-offs are likely to drive much more intermediation if stablecoins continue to gain adoption, he might still argue that the technical foundations of these systems can drive major upgrades to the existing intermediated banking and payments stack. Legacy financial rails could no doubt benefit from more innovation, and our stablecoin interlocutor might assert that by offering bearer asset transactions available 24x7x365 on programmable, interoperable substrates, blockchain-based systems can drive upgrades to traditional rails including better UX, faster and more efficient interbank settlement, and improved cross-border payment flows. However, across all those dimensions, public blockchains are at best an unnecessary complication and at worst a net drag relative to what can already be done more efficiently with some version of a traditional database. Let’s look at each component of this argument in turn.

Some Background: Databases vs Blockchains

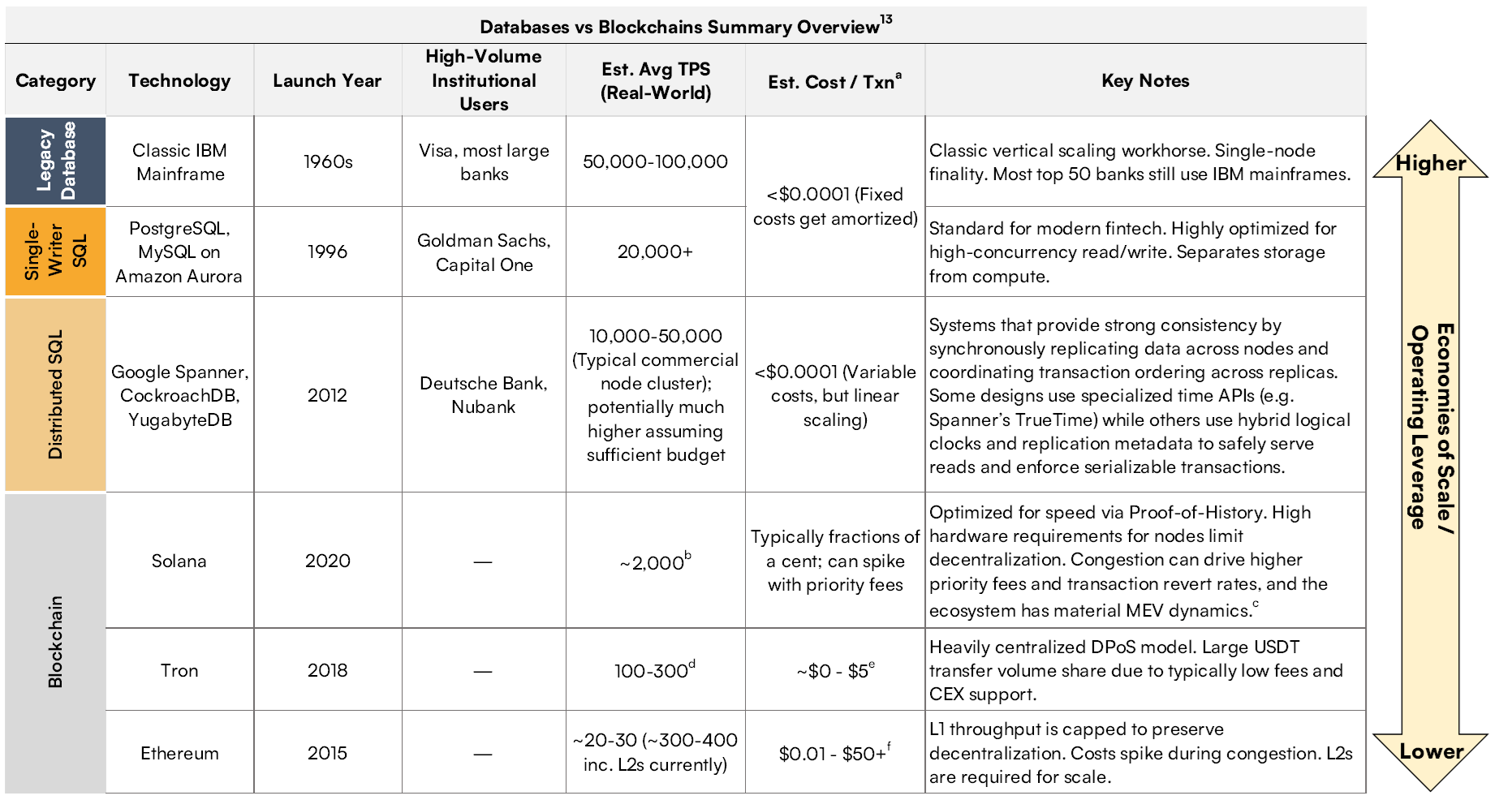

At the highest level, a database is a system for storing and updating shared state (i.e. records that multiple parties need to access and update) where a designated operator or tightly governed consortium maintains the canonical data set and authorizes proposed changes (e.g. an HR system of record, a bank’s deposit ledger, etc). Among several key differences between blockchains and traditional databases is the latter’s lack of consensus and replication across potentially adversarial parties. Unlike blockchains, databases can update and maintain state without needing an arbitrarily large set of unaffiliated and potentially adversarial parties to validate proposed changes, and without requiring such parties to replicate and persistently store the latest updates. Once a centralized intermediary is assumed – and per the discussion up to this point, blockchain-based systems prioritizing throughput and UX will inexorably trend in that direction – “moving” a digital balance from one party to another (even billions of times a day) is a trivial computational action whose efficiency would only be weighed down by unbounded external validation and replication.13
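To make concrete how little machinery a centralized transfer needs, here is a minimal sketch using Python's built-in `sqlite3` module. The table layout, account names, and helper functions are illustrative assumptions, and a production system of record would of course add authentication, audit logging, replication among the operator's own servers, and much else.

```python
import sqlite3

def make_ledger():
    """Create an in-memory ledger with two hypothetical accounts (balances in cents)."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE accounts ("
        "owner TEXT PRIMARY KEY, "
        "cents INTEGER NOT NULL CHECK (cents >= 0))"
    )
    conn.executemany(
        "INSERT INTO accounts VALUES (?, ?)",
        [("alice", 10_000), ("bob", 2_500)],
    )
    conn.commit()
    return conn

def transfer(conn, src, dst, cents):
    """Move funds atomically: both updates commit together or neither does."""
    with conn:  # commits on success, rolls back if an exception is raised
        conn.execute(
            "UPDATE accounts SET cents = cents - ? WHERE owner = ?", (cents, src)
        )
        conn.execute(
            "UPDATE accounts SET cents = cents + ? WHERE owner = ?", (cents, dst)
        )

def balance(conn, owner):
    row = conn.execute(
        "SELECT cents FROM accounts WHERE owner = ?", (owner,)
    ).fetchone()
    return row[0]

conn = make_ledger()
transfer(conn, "alice", "bob", 4_000)
print(balance(conn, "alice"), balance(conn, "bob"))
```

The operator's own `CHECK` constraint plays the role that network-wide validation plays on a blockchain: an overdraft raises `sqlite3.IntegrityError` and the transaction is rolled back. No external party needs to agree, replicate, or store anything, which is precisely why this is cheap and also why it requires trusting the operator.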

As a result, blockchains will tend to carry at least as much – and in practice, significantly more – computational and coordination overhead, degrading their technical throughput and cost-effectiveness relative to conventional databases.14 Even Solana, Tron, and other blockchains that explicitly deemphasize decentralization in favor of boosting throughput still ultimately confront a variety of fundamental challenges that limit their functionality relative to traditional databases, including: safe propagation of blocks; rapid state growth; MEV; and ultimately the same long-term fee pressure that is inevitable when a high volume of time-sensitive demand meets finite blockspace (see Appendix B for more detail on all these points).

This structural edge in database efficiency is visible both in the shift toward increasingly centralized validator sets and database-like intermediaries across much of crypto, as well as the UX already available to most users of traditional fiat payment systems in developed markets. As of early 2026, essentially all such users have access to some form of fast, easy digital payments either directly through banking apps or via platforms like Venmo, Zelle, and CashApp, which rely on simple, instant debits and credits to internal ledgers powered by conventional databases. These systems certainly have limitations and edge cases (which we’ll discuss more below), but for the average bank account holder willing to accept the risks of intermediation in exchange for convenience, stablecoins offer no inherent UX advantage over conventional databases for typical daily payments.

This is the fundamental headwind that any blockchain-based stablecoin must account for: in a world where we’re assuming continued intermediation and trusted third parties, why not just use a database?

Bearer Assets

The stablecoin enthusiast could start by objecting that, while databases may indeed be structurally more efficient on a simple per-transaction basis, blockchains offer users bearer assets (as discussed earlier, assets that can be held and used without counterparty authorization) on open networks, which are fundamentally different from claims on a closed, permissioned database like a bank ledger. Because a stablecoin transfer ostensibly constitutes the movement of an asset in itself (comparable to paying in physical cash) rather than a message to update a bank ledger (e.g. a check or wire transfer), it removes settlement risk from the equation. In the legacy paradigm, every end-user payment is really a promise for final settlement – the point at which a transaction is irrevocably complete in the ultimate settlement asset (e.g. central bank money) – that requires subsequent reconciliation, giving rise to “in-flight” credit risk and the MDR burden we mentioned earlier. But in the stablecoin paradigm, so the argument goes, the transfer is the final settlement. Therefore, even if the future is still dominated by centralized intermediaries (whether blockchain-adopting banks or totally new types of crypto-native institutions), blockchain-based stablecoins as bearer assets still provide superior backend movement of funds.

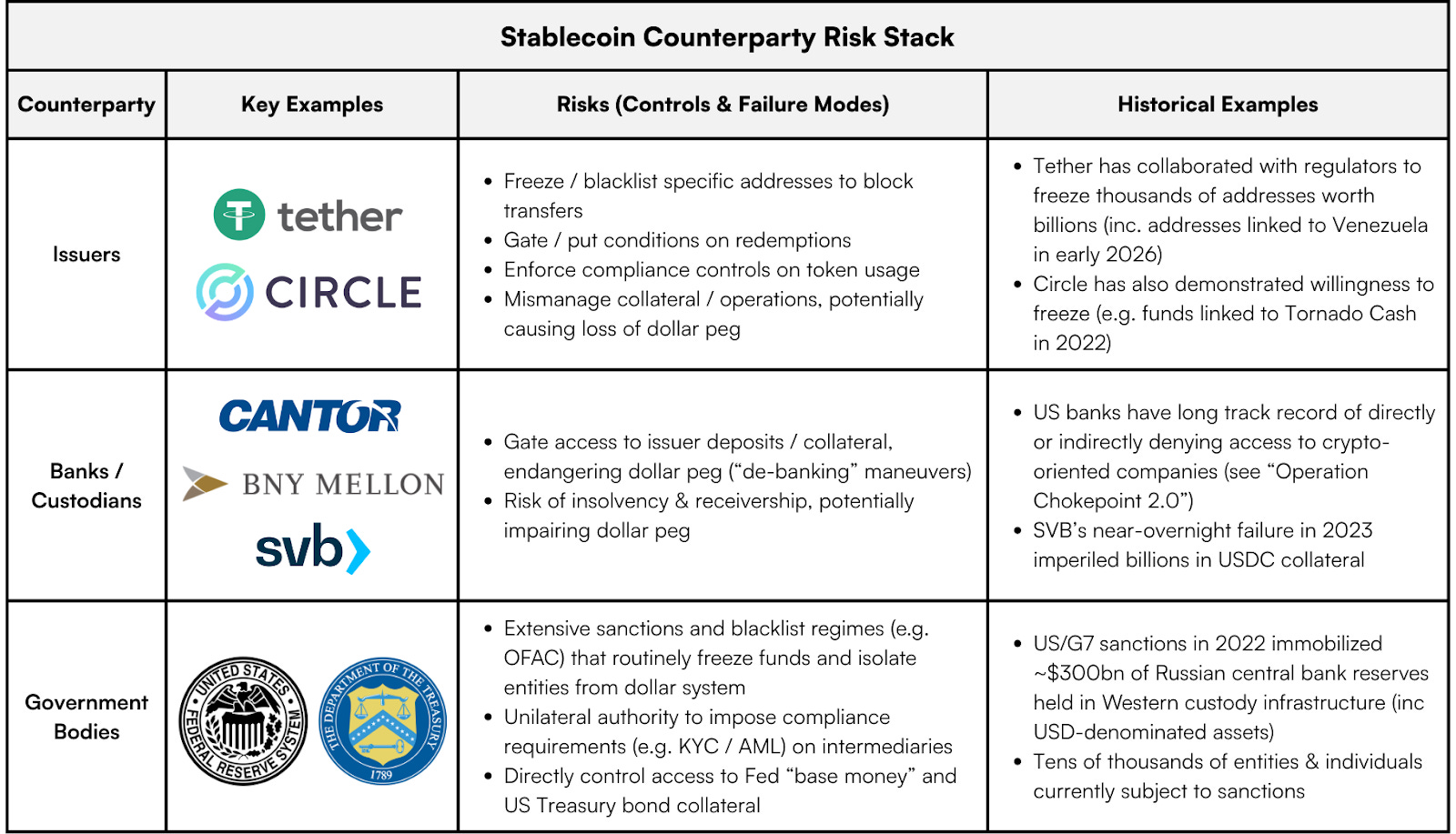

Stablecoins do have some bearer qualities: users can directly hold the private keys necessary to spend them, and in principle they can (usually) be spent without issuer authorization or involvement (though in practice, many users opt for the convenience of custodial tools rather than taking advantage of these attributes). However, stablecoins are ultimately permissioned claims on a counterparty’s reserves, and therefore lack some key characteristics that would make them truly bearer assets. Even if all of the previously discussed centralizing forces that plague blockchain ecosystems could be totally solved (no doubt a heroic assumption), individual and institutional stablecoin users would still be transacting with tokens that have at least three layers of inescapable counterparty risk: their issuers, the banks that custody those issuers’ fiat collateral, and the US government.

Unlike gold, bitcoin, or (arguably) physical cash,15 a stablecoin is someone else’s liability. A stablecoin “balance” is really just an entry in an issuer’s privately administered database, and therefore is always subject to unilateral suspension or seizure by that issuer. Through straightforward smart contract capabilities natively available to the blockchains that facilitate USDT and USDC transactions, these issuers can instantly blacklist addresses at the touch of a button, indefinitely preventing those addresses from sending their stablecoin tokens to a peer, a “DeFi” protocol, a regulated exchange, or anywhere else. These tokens can also be “burned” and reissued elsewhere entirely at the discretion of the issuer. Examples of these types of actions are numerous, encompassing thousands of addresses worth billions of dollars over just the past few years – including the very recent freeze of wallets linked to the deposed Maduro government in Venezuela – and both Tether and Circle actively promote their collaboration with law enforcement to immobilize funds as necessary. Theoretically, actually exercising these powers is more difficult if an issuer does not have sufficient “Know Your Customer” (KYC) information to isolate the tokens of a targeted user, but in practice most users are interacting with stablecoins through platforms that require KYC, and we expect surveillance of these systems to increase over time (more on this below).
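To make the "entry in an issuer's database" point concrete, here is a minimal sketch of an issuer-administered token ledger. It is a toy Python model, not the code of any real USDT or USDC contract; the class and method names (`IssuerLedger`, `freeze`, `burn_and_reissue`) are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class IssuerLedger:
    """Toy model of an issuer-controlled token ledger (hypothetical, illustrative only)."""

    issuer: str
    balances: dict[str, int] = field(default_factory=dict)
    blacklist: set[str] = field(default_factory=set)

    def _require_issuer(self, caller: str) -> None:
        if caller != self.issuer:
            raise PermissionError("only the issuer may call this")

    def mint(self, caller: str, to: str, amount: int) -> None:
        self._require_issuer(caller)
        self.balances[to] = self.balances.get(to, 0) + amount

    def transfer(self, sender: str, to: str, amount: int) -> None:
        # Holding the keys is not sufficient: the issuer's blacklist is checked first.
        if sender in self.blacklist or to in self.blacklist:
            raise PermissionError("address is blacklisted")
        if self.balances.get(sender, 0) < amount:
            raise ValueError("insufficient balance")
        self.balances[sender] -= amount
        self.balances[to] = self.balances.get(to, 0) + amount

    def freeze(self, caller: str, address: str) -> None:
        # The issuer can unilaterally immobilize any address.
        self._require_issuer(caller)
        self.blacklist.add(address)

    def burn_and_reissue(self, caller: str, frozen: str, to: str) -> None:
        # The issuer can wipe a frozen balance and recreate it elsewhere.
        self._require_issuer(caller)
        if frozen not in self.blacklist:
            raise ValueError("address must be frozen first")
        amount = self.balances.pop(frozen, 0)
        self.balances[to] = self.balances.get(to, 0) + amount
```

In this sketch, a holder can call `transfer` freely until the issuer calls `freeze`, after which every transfer to or from that address fails regardless of who holds the keys. The design choice worth noticing is that the permission check lives inside the ledger itself, so no user-side custody arrangement can route around it.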

Even more fundamentally, the value of these tokens depends upon access to and the continued solvency of the regulated US banking system. The starkest illustration of this dependency was the massive 2023 bank run that brought down Silicon Valley Bank, which nearly vaporized over $3 billion in Circle reserves held at SVB and could well have destroyed USDC’s dollar peg if not for unprecedented intervention by the Federal Reserve. But even in less chaotic scenarios, these issuers are always subject to the permission of the banks and custodians holding the collateral that makes their tokens possible, and these institutions can in principle gate redemptions between stablecoins and fiat reserves at will. It’s important to realize here that there is no way for stablecoin issuers (or anyone else) to “self-custody” dollars or near-equivalents like Treasury bills at scale; interacting with true dollar-based collateral always requires a licensed and regulated intermediary, a reality whose drawbacks will be uncomfortably familiar to anyone who lived through the coordinated de-banking era of “Operation Chokepoint 2.0.”

The counterparty stack goes one level deeper still. The Federal Reserve and US Treasury, the issuers of the actual bedrock assets that make the whole system work, always retain final, unilateral control over the accessibility of those assets. While it may be a rare occurrence for these government agencies to adversarially flex that power, their decision to freeze $300 billion of Russian central bank reserves in 2022 was a clear message to the world that no one, not even sovereign nations, can ultimately have full bearer control over assets in this system.

Because stablecoin issuers cannot hold dollar or Treasury reserves “outside” the legacy banking and custody system, and those assets would still be subject to governmental seizure or cancellation even if they could, stablecoins are effectively just a wrapper for underlying claims on the legacy dollar system, or bank liabilities with extra steps. The most obvious result is that, contrary to much marketing hype, there is a hard limit on the bearer properties of stablecoins for individual use: they can be held in self-custody, but – in stark contrast to bearer assets like gold, bitcoin, or physical cash – their usability ultimately remains revocable via issuer controls and regulated chokepoints. This same reality also has significant implications for claims about stablecoins’ superior interbank settlement capabilities that we will address further below.

24x7 Availability

Though stablecoins may not be bearer assets in the fullest sense, an advocate might still argue that they benefit from transactions facilitated through the persistent, always-on rail of a blockchain. Whereas legacy financial systems are gated by traditional business hours, which constitute only ~25% of total hours in a year, blockchains run 24x7x365 and can therefore offer institutions materially smoother, faster transfers and much greater surface area for commerce.

But putting aside the long history of blockchain outages and downtime we mentioned earlier, blockchains don’t actually offer any unique uptime improvements relative to traditional database-like systems. It’s absolutely true that traditional systems have generally been ringfenced by business hours, but the last decade has seen the emergence of many highly performant systems offering true 24x7x365 final settlement of tens of trillions in annual volume, including the Federal Reserve’s FedNow, The Clearing House’s Real Time Payments (“RTP”) network, and various systems abroad including Pix in Brazil, RT1 and TIPS in Europe, and FPS in the UK.16 These always-on networks provide instant settlement of both consumer-grade payments and larger transfers worth millions of dollars, with the maximum limit headed higher over time. Even Fedwire, the Fed’s century-old real-time gross settlement (“RTGS”) system which facilitates over $1 quadrillion per year of instant final settlement for large interbank transfers, already operates on a “22x5” (22 hours, 5 days per week) schedule and is laying the groundwork for 24x7 availability.

Meanwhile, adoption is growing rapidly, with near-universal instant payments coverage in the UK and Europe, and 80% of US banks expected to support receiving instant payments by 2028.17 Crucially, because these networks do not have to contend with scarce blockspace or data availability budgets – which will always exist somewhere in a blockchain-based payments stack – and their state updates (i.e. transactions) amount to proprietary database changes that do not require global consensus and replication, they benefit from near-zero marginal transaction costs that do not meaningfully scale with transaction demand, unlike blockchains. While far from perfect, the rapid growth of these database-oriented systems illustrates that there is nothing about blockchains as such that uniquely enables always-on instant settlement. Ultimately, 24x7 availability is a policy question, not a technical one, and it can be readily and more efficiently accommodated with traditional database technology.

Programmability

Another key feature stablecoin advocates may tout is programmability, or a stablecoin token’s capacity to express complex, composable transaction logic that can be tailored to many different situations. Through blockchain-based smart contracts, stablecoins can potentially facilitate consumer use cases like programmatically split payments (e.g. for multi-party revenue sharing) or institutional applications like automated treasury management and sophisticated multi-asset settlement processes.

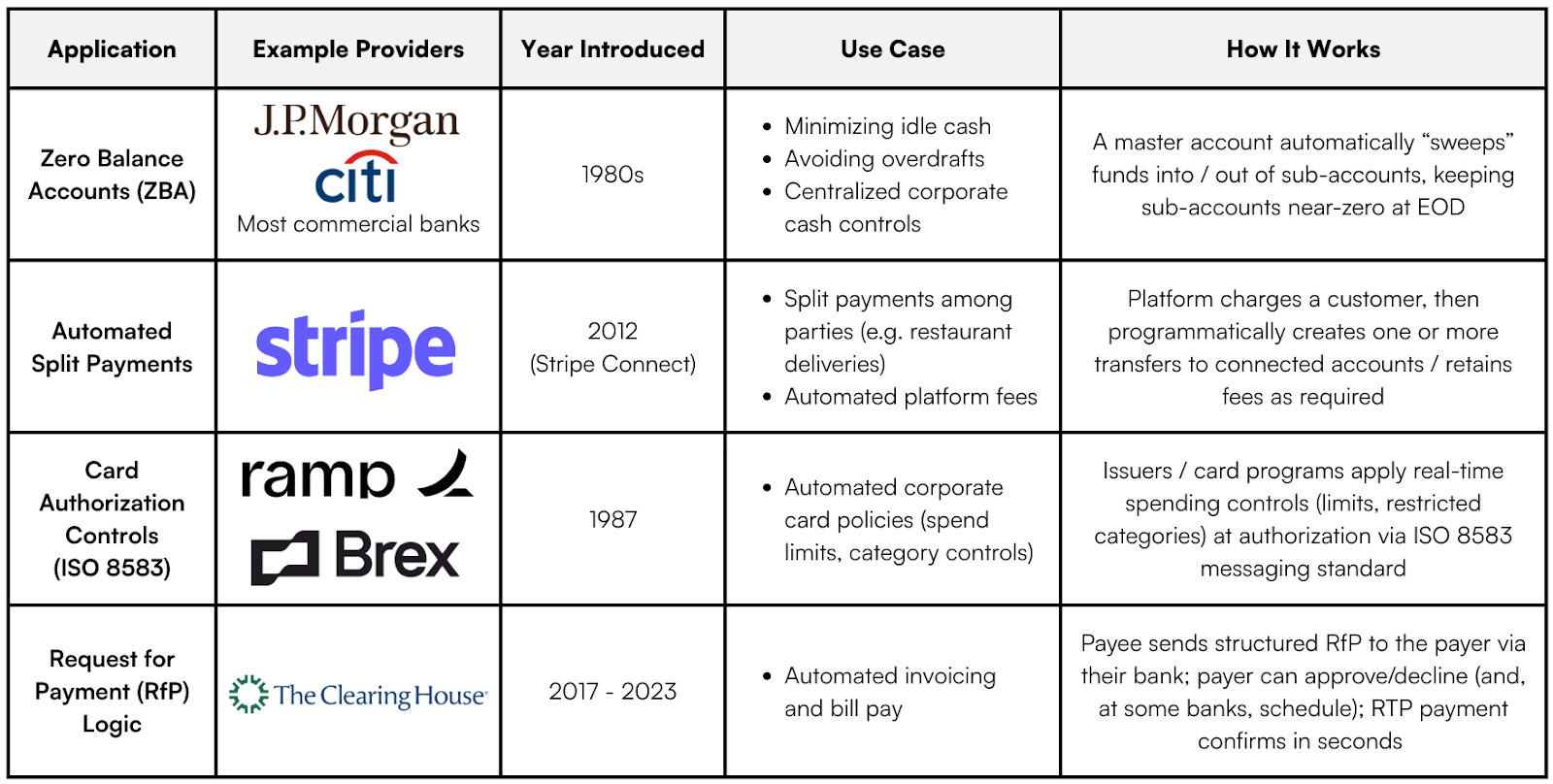

While we won’t address every possible application here, the first key intuition to highlight is that, just like 24x7 availability, programmability is not a unique feature of blockchains, but is common to any software (e.g. a sophisticated database) that can automatically execute commands based on conditional logic. In fact, a wide variety of financial applications leveraging the programmable capabilities of high-powered databases have already been deployed in the wild, including:18

We could list many more, but the upshot should be clear: you don’t need global consensus to execute a conditional payment. Granular programmability of financial interaction has been available for decades, is getting more sophisticated over time, and does not depend on the use of a blockchain.

Furthermore, it’s not obvious that the programmability blockchains offer is even really desirable for deployment in many commercial environments. First off, any system attempting to leverage blockchains for these kinds of use cases faces the key cost-efficiency dilemma we outlined above: the marginal cost of executing any of this logic through a standard SQL database script is near-zero, whereas the per-use cost of running the same operation on a blockchain is structurally orders of magnitude higher even before considering the long-term upward fee pressure we’ve discussed. Just as importantly, blockchains’ programmable features are made possible through a delicate balance of code and incentives that adds wide surface area for edge case smart contract failure resulting in permanent loss of funds. This complexity has driven billions in losses through purely technical failures or exploits over the last several years, and that’s before even considering the impact of dynamics like MEV. Whereas the programmable transactions enabled by modern databases both sidestep all this complexity and preserve reversibility in case of error, the “programmability” that blockchain advocates promote – particularly when coupled with the irreversibility that is supposedly a selling point for blockchains – often masks a degree of technical brittleness inappropriate for high-volume global commerce and / or sensitive corporate functions like treasury management.
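As a rough illustration of conditional payment logic running on a conventional database, the sketch below implements a programmatic split payment with Python's built-in `sqlite3` module. The table layout, account names, and function names (`open_demo_ledger`, `split_payment`, `balances`) are all assumptions made for this example, not a description of any production banking system.

```python
import sqlite3


def open_demo_ledger() -> sqlite3.Connection:
    """Create an in-memory ledger with three hypothetical accounts (balances in cents)."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE accounts ("
        " id TEXT PRIMARY KEY,"
        " balance INTEGER NOT NULL CHECK (balance >= 0))"
    )
    conn.executemany(
        "INSERT INTO accounts VALUES (?, ?)",
        [("buyer", 10_000), ("artist", 0), ("label", 0)],
    )
    conn.commit()
    return conn


def split_payment(conn, payer, amount_cents, shares_bps):
    """Debit the payer and credit each payee its share, all or nothing.

    shares_bps maps payee id -> share in basis points and must sum to 10,000.
    """
    if sum(shares_bps.values()) != 10_000:
        raise ValueError("shares must sum to 10,000 basis points")
    credits = {p: amount_cents * bps // 10_000 for p, bps in shares_bps.items()}
    # Assign any rounding remainder to the first payee so no cents are lost.
    first = next(iter(credits))
    credits[first] += amount_cents - sum(credits.values())

    with conn:  # commits on success, rolls back if anything below raises
        cur = conn.execute(
            "UPDATE accounts SET balance = balance - ? WHERE id = ?",
            (amount_cents, payer),
        )
        if cur.rowcount != 1:
            raise LookupError(f"unknown payer: {payer}")
        for payee, cents in credits.items():
            cur = conn.execute(
                "UPDATE accounts SET balance = balance + ? WHERE id = ?",
                (cents, payee),
            )
            if cur.rowcount != 1:
                raise LookupError(f"unknown payee: {payee}")


def balances(conn):
    """Return the current ledger state as {account id: balance in cents}."""
    return dict(conn.execute("SELECT id, balance FROM accounts"))
```

Calling `split_payment(conn, "buyer", 1_000, {"artist": 7_000, "label": 3_000})` moves 700 cents to one payee and 300 to the other in a single transaction. If a payee does not exist or the payer would be overdrawn, the whole transaction rolls back and the ledger is left unchanged, which is the reversibility property described above.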

Interoperability

Perhaps the most compelling proposed attribute of stablecoins is the potential for greater interoperability relative to existing rails: a single, open-source standard for value transfer that replaces the fragmented landscape of legacy banking APIs. Advocates like a16z’s Chris Dixon argue that in the current system, financial innovation is stifled by gated infrastructure – to build a fintech app, a company must slowly work out individual business development deals and integrate with bespoke, proprietary bank ledgers. In the blockchain paradigm, however, the financial rail is public infrastructure. Just as a developer or company can freely “plug into” the unified open standards of the internet for information transfer, they can now plug into open standards like Ethereum’s ERC-20 for money transfer. Similarly, Circle’s Jeremy Allaire envisions this as an “Economic Operating System,” where “internet-native protocols” standardize global settlement the way SMTP standardized email. Banks, financial institutions, and companies in general can “Integrate Once” with a blockchain, instantly connecting them to the entire global economy.

This vision of a unified banking backend built on blockchains is unfortunately riddled with issues and questionable assumptions. In the first place, as with 24x7 availability, shared standards are ultimately just a question of policy and coordination, not a unique property of blockchains. The legacy banking industry has already made real strides toward interoperability through new protocols like ISO 20022, a common messaging standard recently adopted by SWIFT, Fedwire, and CHIPS. This standard unifies inter-institution payment communication without the overhead of a decentralized consensus mechanism and is now used in more than 90% of high-value global payments. Brazil’s Pix system – broadly adopted in the country in just three years – takes this even further, offering domestic financial institutions and individuals a unified “shared state” with standardized rules to which they can interoperably connect. After first connecting with Pix, any individual or institution in Brazil is instantly reachable by any other bank account in the country without any bespoke bilateral integrations, effectively fulfilling the slick “integrate once” vision promoted by the stablecoin industry. Crucially, all this is facilitated directly by Brazil’s central bank with traditional database infrastructure, the diametric opposite of the approach advocated by most stablecoin promoters. This no doubt hands over more control to the central bank, but as we discussed earlier, fiat stablecoins built on central bank collateral don’t fix that; they just add even more layers of counterparty risk and technical overhead.

That said, while Pix was rolled out quickly, wholesale industry cutovers like these can take time, and ISO 20022 was famously delayed in its broad implementation, so a stablecoin advocate could argue that plugging into a public blockchain allows banks, fintechs, and corporations to sidestep potentially long rollout periods they can’t control and just start working on a shared standard right away. However, as discussed elsewhere, the claim that blockchain-based stablecoins offer a “single standard” is misleading at best, as the blockchain landscape is arguably even more fragmented than the banking system that advocates seek to replace. “Integrating once” is impossible in an environment where liquidity is splintered across dozens of incompatible Layer 1 blockchains and Layer 2 rollups, each requiring its own bridge infrastructure and security assumptions. Furthermore, stablecoin standards themselves are not universal, as a USDT token on Ethereum is legally and technically distinct from a USDT token on Solana, and smart contract implementations vary wildly in their upgradability and risk parameters. The result is just a fractal of new silos rather than a unified rail.

But even if we grant the possibility of a single blockchain standard, the “Universal Rail” thesis fails because it ignores the legal and operational reality of banking. For one thing, blockchain tokens are relatively data-poor by design. While the ISO 20022 standard carries rich metadata needed to facilitate complex institutional finance (invoice numbers, tax codes, remittance details, and 800+ other fields), an ERC-20 token transfer by itself carries only the barest “To, From, and Amount” data. To make these tokens useful for business, institutions would need to either leverage complex on-chain smart contracts or, more realistically, build off-chain indexing layers to re-attach and handle this context. The first option reintroduces the problem of standardization (there is no universally accepted, ISO-equivalent standard for blockchain payment metadata); adds new privacy concerns around sensitive business details that would need to be disclosed on-chain (e.g. pricing, payment terms, etc.); and significantly increases the cost of using these tokens relative to the near-zero marginal cost of ISO 20022 messaging (see Appendix B for more detail). The second clearly cuts against the ostensible cost, simplicity, and coordination advantages of the interoperable rail.

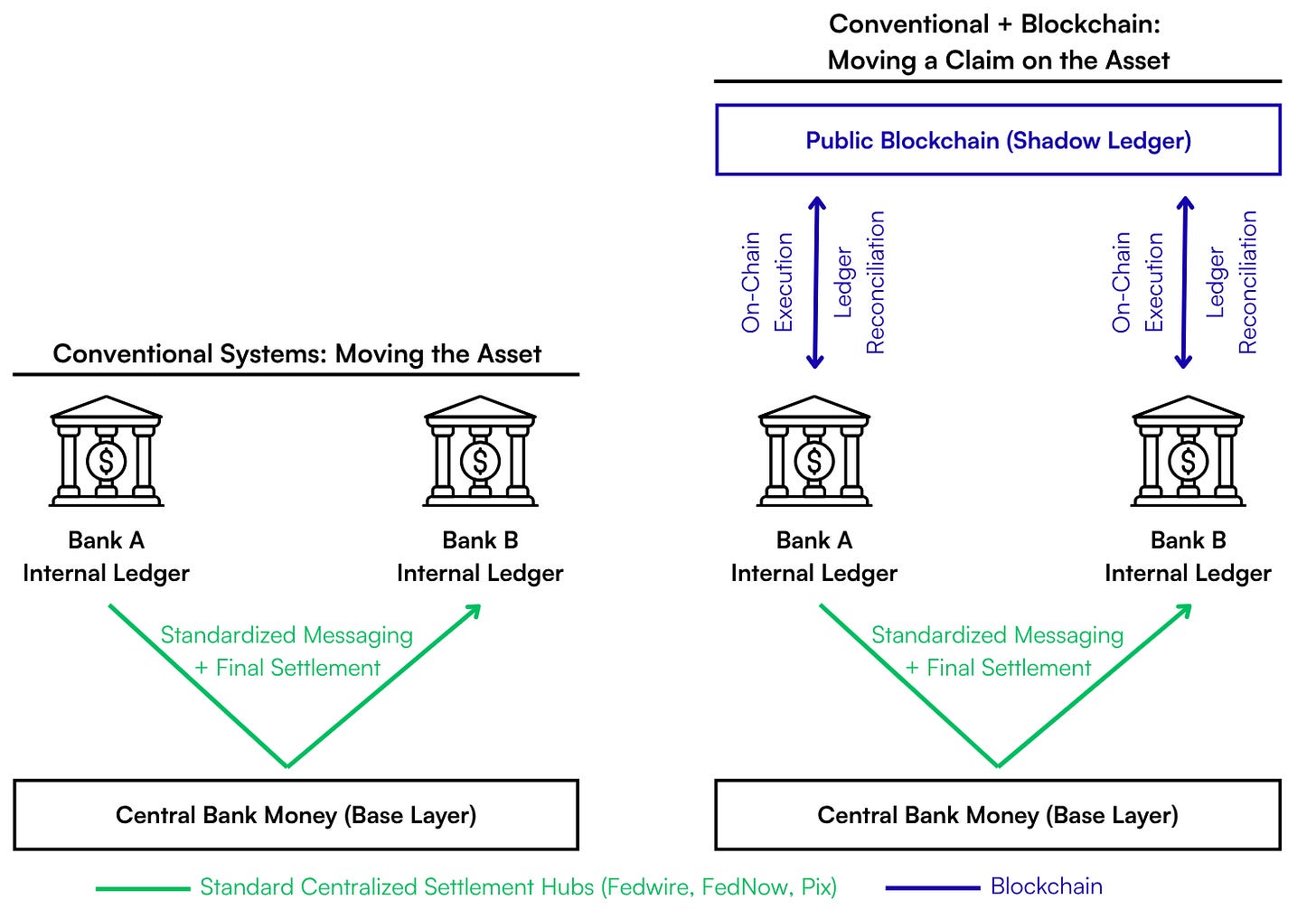

On an even more basic level, a shared blockchain does not replace the need for internal reconciliation, but rather multiplies it. Banks are legally and commercially required to maintain their own internal ledgers – which map ultimately to “base layer” central bank money – as the definitive source of truth for operations, regulatory reporting, and risk management. Adopting a blockchain does not delete these private books; it merely adds a new layer (effectively a shadow ledger) that must be constantly synchronized with them. Rather than simplifying the stack, this creates a permanent reconciliation tax where institutions must manage a more complex system with a new set of accounting entries, forcing them to bridge the gap between their legally binding internal records and a slower, more expensive, and technically brittle source of truth. As long as central bank money remains the ultimate, bedrock base layer of the system – and commercial banks the first-tier intermediator of that substrate – blockchains are net additive to the system’s complexity.

All of this brings us to a fundamental point: with blockchain-based stablecoins, the map is not the territory. Unlike true final-settlement systems such as Fedwire or bitcoin, both of which have their own native, endogenous settlement units that make no reference to anything external to the system (central bank money for Fedwire, sats for the bitcoin network), a blockchain-based stablecoin depends on reference to assets – as well as custody, redemption, and legal mechanics – that exist outside the settlement rail on which it moves. These tokens may offer a useful “map” of the dollar system in some contexts, but absent the Federal Reserve and US Treasury replacing their settlement and collateral infrastructure with a blockchain, they can never be the “territory” of the dollar itself, only an extra representational layer on top of it.

Better Interbank Settlement?

Having delved into the key attributes that supposedly make blockchain-based stablecoins attractive, we can reason through some of the key ways stablecoins are supposed to upgrade legacy interbank settlement:

Given these structural limitations, it is unsurprising that the much-touted “institutional adoption” of stablecoins has largely avoided the public blockchain vision entirely. Instead, projects like JPM Coin or Citi’s Regulated Liability Network are effectively private walled gardens: databases that are nominally “distributed” but are controlled by a single entity or consortium, indistinguishable in practice from a reskinned version of the existing banking system. Even pilots that technically utilize public chains like BlackRock’s BUIDL are so heavily permissioned and whitelisted that they function more as intranets than open financial networks. While advocates may retreat to theoretical use cases like “Atomic DvP” or “Collateral Mobility” to justify the technology, these rely on the widespread existence of tokenized securities (“Real-World Assets”) which do not exist at commercial scale today. Even if they did, all the previously discussed constraints and trade-offs mean that these use cases would at most support tightly governed institutional ledgers for narrowly defined workflows, not public blockchains as open global payment rails.

Upgraded Cross-Border Flows?

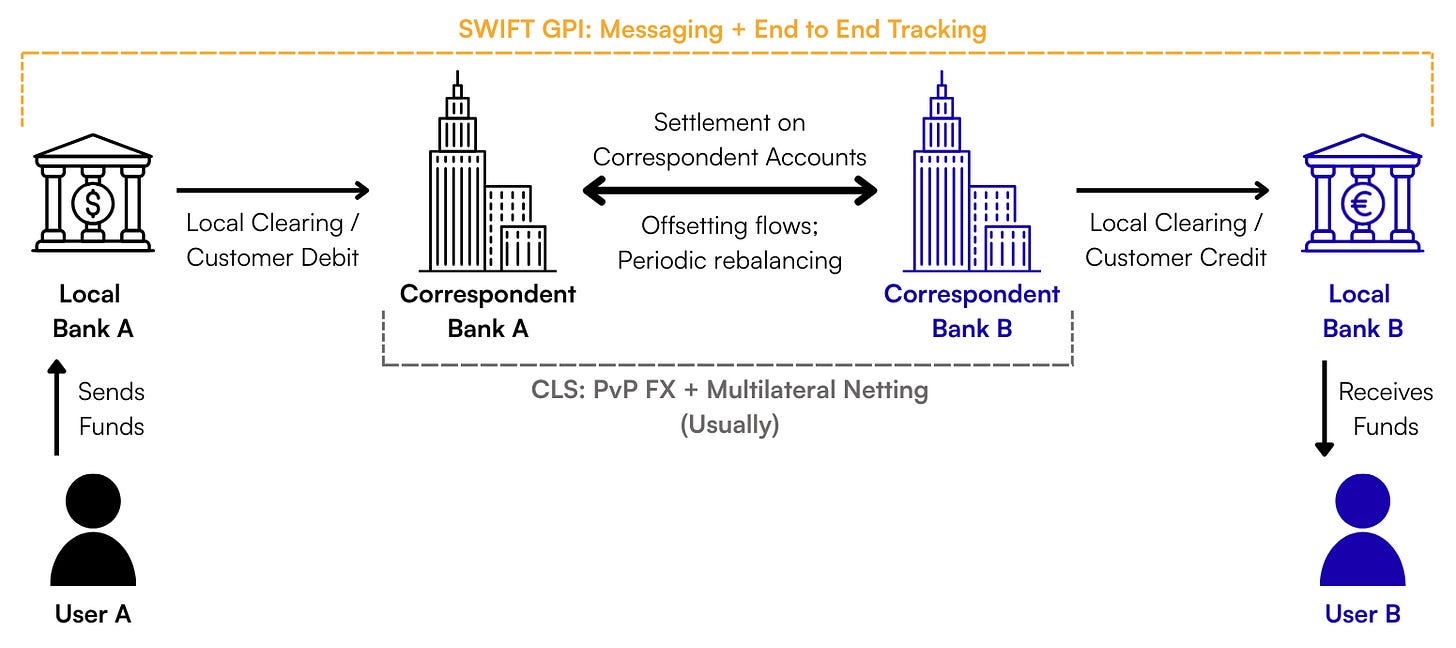

While domestic banking may see little to no benefit from blockchains, advocates may argue that blockchain-based stablecoins can totally rearchitect cross-border flows by eliminating the chain of correspondent banks that add friction and fees to every international payment. In the legacy model, sending money from a local bank in the United States to a supplier in another country involves a chain of 3-5 banks and intermediaries:

Each “hop” in this chain adds delays, opacity, and settlement risk. The most notorious danger in this system is “Herstatt Risk,” or the possibility that one party in a cross-border payment fails after receiving funds but before paying out its leg of the transaction, leaving counterparties exposed to losses. Stablecoin proponents argue that a blockchain can solve this issue by “teleporting” value directly from sender to receiver, achieving near-instant final settlement that eliminates these hops and risks outright. There are a few ways that this structure could play out, but on closer inspection, each relies on assumptions that struggle under real-world constraints at scale.

The smoothest version of this upgraded flow effectively assumes a dollar stablecoin “circular economy” where the Sender and Receiver are both able to freely use the stablecoin for anything. In this vision, a supplier in a non-dollar jurisdiction can simply stay in USDT or USDC for their local spending needs, allowing for a frictionless “teleportation” flow: USDC Sender → Blockchain → USDC Receiver. However, while the US government may want to use stablecoins to spread dollar hegemony (we’ll address this more below), the world is not close to fully dollarized. Realistically, the vast majority of users in international markets cannot live natively on a dollar standard; they must convert to local fiat to pay taxes, living expenses, wages, etc. This dynamic immediately reintroduces at least one intermediated step back into the mix: USDC Sender → Blockchain → USDC Receiver → Local Bank/Exchange for conversion. If the sender also starts in a non-dollar currency, a conversion step is required on the front end as well. Once you add these converting exchanges and market makers into the mix, you begin to reintroduce the same settlement risks, credit exposures, and delays the system was supposed to eliminate.

To avoid reintroducing such intermediaries, you would need to see the rapid dollarization of many global economies, a phenomenon local governments will likely be both eager and able to limit given that, as we established earlier, the vast majority of stablecoin flows are facilitated by centralized intermediaries. However, if we grant that demand for dollar exposure in unstable regimes is high and that users will find ways to adapt, we must ask: what would a scaled version of this flow actually look like in practice?

To the extent a “dollarize the world with stablecoins” strategy works, it will inevitably be highly regulated and surveilled at both endpoints. There is no scenario where the U.S. government allows trillions in “permissionless” dollar-based flows in and out of the country to unidentified actors. Because these tokens explicitly depend upon access to permissioned central bank and Treasury collateral, the U.S. government will have all the leverage it needs to enforce full KYC and whitelist/blacklist controls via issuers and their regulated partners (collectively, Virtual Asset Service Providers, or “VASPs”). The resulting flow would therefore look something like: USDC Sender → Regulated VASP A → Blockchain → Regulated VASP B → USDC Receiver. This essentially recreates the old correspondent flow, just with a different set of actors. Crucially, this will tend to encourage just as much international fragmentation as currently exists under SWIFT; large swaths of the global economy (e.g., adversarial nations like China, Russia, or Iran) will almost certainly be ineligible to join this emergent “VASP network,” leaving the geopolitical silos of the legacy system intact.

An advocate might object that, even if you end up with a similar intermediated flow, this arrangement is still preferable to the status quo because it substitutes instant final settlement (once a VASP broadcasts to the blockchain) for the much longer settlement windows of the legacy system, solving the Herstatt Risk mentioned earlier. However, the legacy banking system effectively already solved this risk decades ago without blockchains. Following the 1974 collapse of Bankhaus Herstatt – the source of a systemic panic which gave rise to the term – the industry eventually established Continuous Linked Settlement (CLS) in 2002. CLS enables “Payment-versus-Payment” (PvP) settlement, ensuring that both legs of a currency trade either settle simultaneously or not at all, thereby eliminating principal risk (crypto-native readers may know this concept as “atomicity”). CLS now settles over $7 trillion daily with same-day finality in zero-risk, “base layer” central bank money, all without the scaling challenges and technical risks of a blockchain.
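The all-or-nothing property behind PvP can be sketched in a few lines on an ordinary in-memory ledger. This is a toy model of the concept only, not a description of how CLS is implemented; the function name `settle_pvp`, the bank names, and the amounts are hypothetical.

```python
def settle_pvp(balances, leg_a, leg_b):
    """Settle both legs of a currency trade, or neither.

    balances: dict mapping (party, currency) -> amount held on the ledger
    leg_a, leg_b: tuples of (payer, payee, currency, amount)
    Returns True if both legs settled, False if nothing moved.
    """
    legs = (leg_a, leg_b)

    # Phase 1: verify that every leg is fully funded before touching any balance.
    for payer, _payee, currency, amount in legs:
        if balances.get((payer, currency), 0) < amount:
            return False  # one leg is short, so neither leg settles

    # Phase 2: apply both legs together.
    for payer, payee, currency, amount in legs:
        balances[(payer, currency)] -= amount
        balances[(payee, currency)] = balances.get((payee, currency), 0) + amount
    return True
```

With `balances = {("bank_a", "USD"): 100, ("bank_b", "EUR"): 90}`, calling `settle_pvp(balances, ("bank_a", "bank_b", "USD", 100), ("bank_b", "bank_a", "EUR", 90))` swaps the two positions. If either bank is short, the function returns `False` and no balance changes, so neither side is ever left having paid without being paid.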

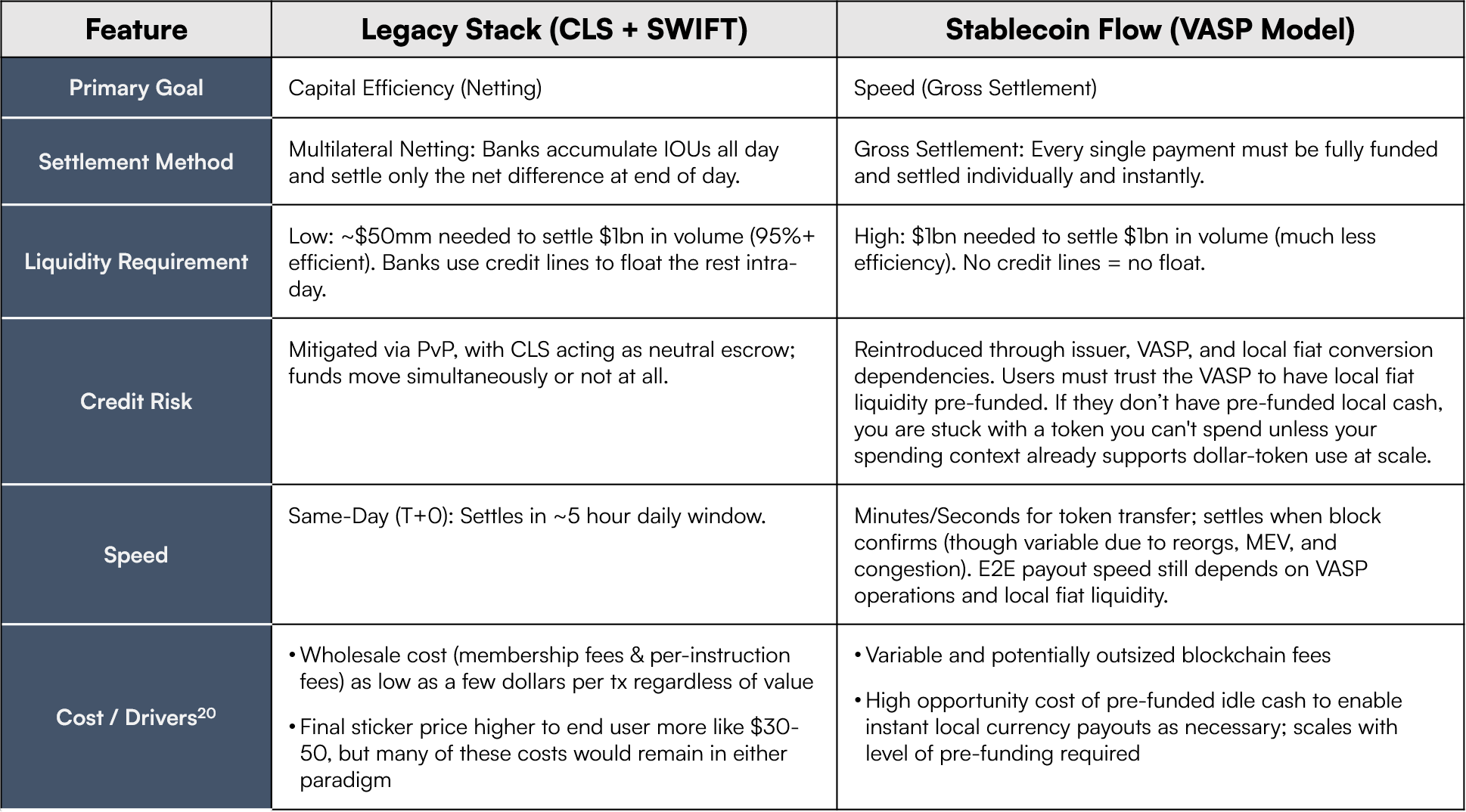

While CLS is not technically “instant” (all transactions settle same-day, but within a ~5 hour window), that’s ultimately a function of its netting architecture; if you want true “instant” final settlement, you have to move to a gross settlement model. This brings us to the final key trade-off for the type of system under consideration: capital efficiency. Legacy systems like CLS utilize multilateral netting, where banks only need to settle the net difference between their incoming and outgoing payments. This reduces liquidity requirements by over 95%, allowing trillions in value to move with relatively little trapped capital.19 Meanwhile, as we’ve discussed, blockchains operate on a gross settlement system: every transaction must be fully pre-funded. If a VASP wants to send $1 million in USDC across borders “instantly” on-chain, they must have the full $1 million USDC on hand immediately to effect the transaction. To replicate global trade volumes with blockchains, VASPs or similar intermediaries (the new “correspondent banks”) would have to lock up massive amounts of capital in pre-funded wallets across fragmented chains and exchanges. This working capital burden is effectively a new wholesale system cost that replaces the old SWIFT / CLS fees – at many multiples of the legacy system cost20 – and it will either be passed on to end-users or encourage a return to more capital-efficient off-chain netting, neutralizing one of the ostensible key benefits of using the blockchain in the first place.
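The difference between gross pre-funding and multilateral netting can be illustrated with a small calculation. The sketch below uses three hypothetical participants and invented payment amounts; it assumes, for the gross case, that each payment must be funded in full and that incoming funds are not recycled within the settlement window. It is not a model of CLS or of any specific blockchain.

```python
from collections import defaultdict


def gross_funding(payments):
    """Liquidity each payer must pre-fund if every payment settles individually."""
    need = defaultdict(int)
    for payer, _payee, amount in payments:
        need[payer] += amount
    return dict(need)


def net_positions(payments):
    """Net position per participant: incoming minus outgoing."""
    net = defaultdict(int)
    for payer, payee, amount in payments:
        net[payer] -= amount
        net[payee] += amount
    return dict(net)


def net_funding(payments):
    """Under multilateral netting, only participants with a net debit pay in."""
    return {p: -v for p, v in net_positions(payments).items() if v < 0}


# Hypothetical payment obligations: (payer, payee, amount)
payments = [
    ("A", "B", 100),
    ("B", "C", 90),
    ("C", "A", 95),
    ("B", "A", 20),
]

gross_total = sum(gross_funding(payments).values())
net_total = sum(net_funding(payments).values())
```

In this invented example the participants would need to pre-fund 305 units in total under gross settlement, but only 15 units under multilateral netting, because most obligations offset one another. The exact ratio depends entirely on how balanced the flows are, so these numbers should be read as an illustration of the mechanism rather than as evidence for any particular savings figure.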

Finally, even if we assume intermediaries are willing to bear this capital cost (or that users are willing to subsidize it), we run into the final fundamental issue: as with the other use cases we’ve discussed, you simply don’t need a blockchain for this. This is perfectly illustrated by the emergence of the Circle Payments Network (CPN), Circle’s purpose-built solution for cross-border flows. This overlay has enabled a variety of positive case studies advocates might cite as evidence that stablecoins are already improving cross-border flows in the wild, including Alfred’s local BRL payouts via Pix, Tazapay’s local-currency bank deposits in Asia, and Conduit’s use of CPN to accelerate multi-corridor routing. However, the common pattern across these examples is not the unique and indispensable advantages of a public blockchain rail, but rather the managed service layers coordinated by Circle and similar operators to handle compliance, FX, business logic, and local banking integrations. Case studies like these may reflect genuine improvements in these specific corridors relative to the status quo, but in practice they work mainly by wrapping stablecoins around existing domestic rails and institutional infrastructure rather than sidestepping them with a disintermediated public blockchain.

While CPN technically broadcasts transactions on blockchains today, it is structurally a system of internal transfers orchestrated by Circle, with transactions executed by whitelisted participants under Circle’s rules, conditions that dilute the benefits of a blockchain in the first place. As long as Circle has ultimate control over participation in the network and transaction finality, there is no technical reason that transferring USDC claims between whitelisted CPN participants would be faster, cheaper, or more reliable on a public blockchain than on a private, Circle-managed SQL database, especially at the long-term volumes advocates expect. We’ll discuss this more below, but as issuers like Circle move closer to this closed-loop model to solve for speed and compliance, they inadvertently prove the thesis: the “upgrade” stablecoins might offer here is not fundamentally the result of a blockchain rail.

A Ledger Is All You Need

Netting it out, we now have a pretty comprehensive case that the much-promoted vision for blockchain-based stablecoins suffers from serious scaling limitations and misguided design assumptions. But all this still leaves us with a key unanswered question: if the current incarnation of stablecoins is so unsound, how have stablecoins grown to account for – as many headlines suggest – volumes that rival major card networks? If the architecture has so many limitations, why have stablecoins apparently achieved such clear product-market fit?

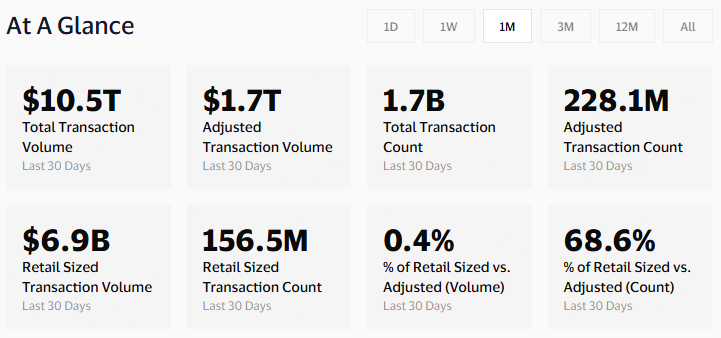

In the first place, it’s important to consider some context underpinning claims about stablecoins’ volume. Headlines touting volume already eclipsing Visa are often severely distorted by flows like bot activity, internal exchange transfers, and automated trading strategies that don’t look anything like traditional retail payments or institutional settlement. Crucially, these types of flows (e.g. a single bot spamming thousands of tiny identical trades to a single smart contract) tend to be much more homogeneous and optimizable than real-world retail payments with more heterogeneous characteristics, making them far easier to scale.21 When adjusting for confounds like these, data provided by Visa and analytics firm Allium Labs show that only 10-20% of total on-chain stablecoin volume reflects organic economic transfers comparable to real-world payment and settlement flows, with retail-sized flows comprising a negligible portion of total activity by both volume and transaction count. Meanwhile, the effective realized “TPS” for organic payments is something like 50-100 across all public chains over the past year (as opposed to 50,000+ for legacy networks built on databases).

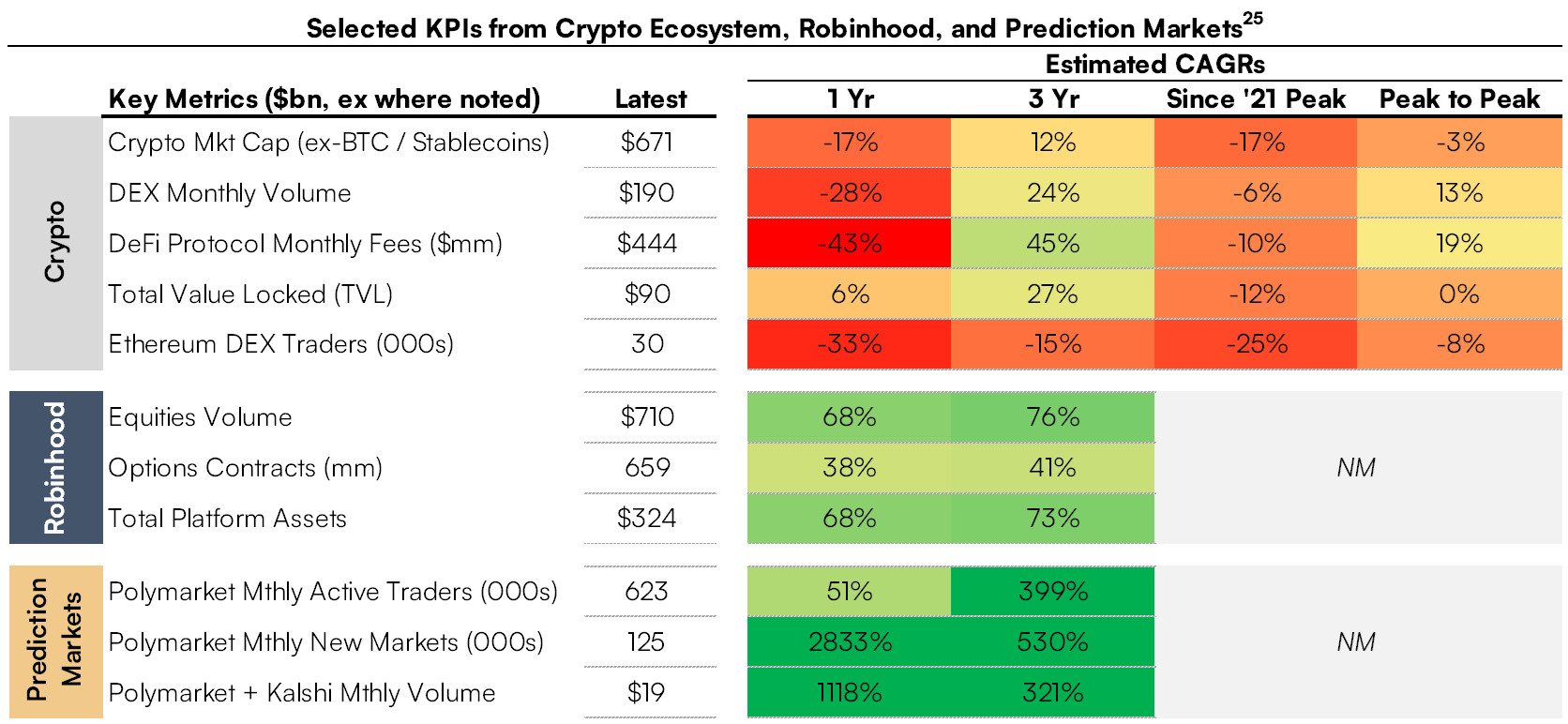

But even leaving those nuances aside, it’s clear that stablecoin use has grown substantially and impressively over the last five years, with the total stablecoin market cap ballooning from just ~$10 billion in 2020 to as high as ~$300 billion by the end of 2025. Despite all the limitations of blockchains and compromises required to make these systems work, there is obviously organic demand to use dollar stablecoins, especially in markets outside the US where stablecoin uptake is growing rapidly. Stablecoin advocates could concede all the arguments we’ve made so far, but then fairly point to this growing usage as a sign that we’re missing the forest for the trees: the demonstrable fact is that the US dollar, for all its faults, is the cleanest of the dirty fiat shirts, and virtually every international user would prefer it over his local, inferior government currency. As a result, they might say, it’s misguided to compare stablecoins to legacy banking rails, as the key target demographic of emerging market users has historically had limited access to that system anyway. Rather, we should acknowledge that stablecoins represent a novel, step-function improvement in such users’ access to the dollar system, and furthermore we should recognize that ongoing demand for US dollar exposure will likely support significant growth of global stablecoin use over time.

We’re sympathetic to much of this thinking, and as we acknowledged upfront, the potential benefits of this trend are not lost on key officials like Treasury Secretary Scott Bessent. Of course, it’s worth asking whether the total balance sheet capacity of this cohort of emerging markets users – perhaps ~$5 trillion in cash-like wealth that could be readily dollarized22 – is really significant enough to meaningfully defease the US government’s bloated and growing debt burden. Moreover, the recent pace of uptake has been insufficient for the task at hand, as stablecoins have only added ~$100 billion in market cap since the Trump administration returned to office; impressive, but a drop in the bucket relative to the $2 trillion of incremental capacity the Treasury is ostensibly targeting over the next several years (particularly since stablecoins outstanding have gone the wrong way since October 2025’s crypto crash).

But either way, notice that in this framework, the true essence of what stablecoin users value is access to synthetic dollar claims, not decentralized consensus (or technical theater gesturing in that direction). Users want low-friction digital tokens that give them the ability to make dollar-denominated transactions with a unit also used and accepted by those they would be likely to pay (e.g. peers, local merchants, employees, etc). As we’ve shown up to this point, nothing about that use case implies or benefits from the facilitation of a blockchain: all that’s needed is a highly performant digital ledger with a sufficiently large network effect to support broad spending capabilities. The growing product-market fit of stablecoins fundamentally comes from participation in digital dollar ledgers maintained by specific credible issuers, not from “blockchain technology.”

To the extent that US financial regulators don’t want to encourage this product-market fit – as was very clearly the case during the last Presidential administration – they could neuter the entire ecosystem overnight given that, as discussed, these tokens are entirely dependent upon access to regulated US dollar collateral. On the flipside, if US officials are hoping to encourage uptake of stablecoins to spread dollar hegemony – which seems to be the case for the moment given the recent passage of the GENIUS Act – public blockchains are neither necessary nor optimal to accomplish that goal. Indeed, that legislation, hailed as a landmark bill by many in the stablecoin industry, carves out no special role for and does not mandate reliance on incumbent public blockchains.23 The bill defines stablecoins not by their technological architecture, but simply by their function: digital assets redeemable at par and backed 1:1 by high-quality liquid collateral like US Treasuries. “Blockchain technology” as commonly understood is incidental to the operation of both “grey market” and GENIUS-compliant stablecoins, and all the incentives skew toward the ultimate abandonment of public blockchains for this use case.

Drop the Blockchain; It’s Cleaner

If the future of the digital dollar does not depend on public blockchains, what might it ultimately look like? Here we admittedly drift into the realm of the speculative, but fortunately, several recent updates from the key players in the space offer some significant clues as to the direction of travel.

The most obvious signal is the industry’s recent embrace of private, proprietary infrastructure. In the past year, both Circle and fintech giant Stripe have announced their own permissioned blockchains (called Arc and Tempo, respectively), both of which rely on a closed consortium of approved institutional validators to facilitate transactions. While these systems are billed as “blockchains,” their recordkeeping and state change updates depend on Byzantine Fault-Tolerant consensus engines shared among a small group of whitelisted actors to maintain coordination, a design choice that effectively mirrors a conventional distributed database, discarding the global, adversarial validation of public blockchains in favor of speed and control.24 Meanwhile, though both systems are compatible with the “Ethereum Virtual Machine” (EVM) and can interoperate with the Ethereum ecosystem, both also rely on stablecoins paid to each system’s consortium for transaction fees (rather than native blockchain tokens like ETH or SOL paid to Ethereum / Solana validators), moving value capture away from public blockchain infrastructure and toward the operators of these closed systems.

These new private “blockchains” are clearly laying the groundwork for greater vertical integration around the stablecoin payments use case – Arc natively integrates with the previously mentioned Circle Payments Network, while Tempo is specifically optimized for fast payments that plug into Stripe’s emerging closed-loop stack, which leverages its recent acquisitions Privy and Bridge – shifting these players further away from dependency on public blockchain uptime, congestion, roadmaps, and fees. Over the long run, we would expect even the “blockchain” branding of these private systems to be dropped. As we’ve seen throughout this essay, once a system is governed by a central operator or permissioned consortium, it is functionally indistinguishable from a walled garden database, and any invocation of a blockchain amounts to, as crypto analyst Omid Malekan puts it, a dictator holding “elections” where he is the only candidate.

Though leading stablecoin issuer Tether has not announced its own proprietary blockchain, its recent actions suggest a similar trajectory toward vertical integration that can ultimately reduce dependence on public blockchains. Last year, Tether launched its Wallet Development Kit (WDK), an open-source software toolkit designed to let app developers and ecosystem participants easily build and use cryptocurrency wallets supporting USDT. It’s still very early, but this rollout could represent a phase shift in Tether’s relationship to its users: until now, Tether has left all UX choices in the hands of third-party developers and blockchain ecosystems, never directly touching real-world applications (except to freeze funds when necessary). By contrast, WDK embeds Tether in a direct relationship with its end users, and its toolkit puts in place the critical primitives Tether would need to begin controlling much more of the stablecoin payments stack from the edges.

More specifically, WDK leverages the concept of “Account Abstraction” to separate a user’s account and experience from the underlying mechanism of transaction facilitation and fee payment. Echoing the choices of Arc and Tempo, this allows users to pay transaction fees in tokens (e.g. USDT) not native to an underlying blockchain, enabling “gasless transactions” from a user perspective and opening up a smoother, more predictable payment UX. WDK also includes support for “Paymasters,” or third parties (Tether or its partners) that actually handle and can freely subsidize the underlying fees paid to blockchain operators. The architecture is designed to be ecosystem-agnostic, with support for a wide variety of transfer rails including Bitcoin, Ethereum, Tron, Solana, and even the Lightning Network, with WDK providing the dynamic routing logic to determine the best means of facilitating any given payment. Developers build against the WDK API (rather than any particular blockchain SDK), giving Tether control over how funds sent from WDK-based endpoints are ultimately transferred.
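
To make the fee abstraction concrete, here is a minimal sketch of the paymaster idea described above. It is an illustration under stated assumptions, not WDK’s actual API: the `Paymaster` class, its fields, and the prices used are all hypothetical. The point is only that the user’s fee is quoted in USDT while someone else pays the underlying gas in the chain’s native token.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass
class Paymaster:
    """Hypothetical sponsor that pays native gas on a user's behalf."""
    native_balance: Decimal    # native tokens (e.g. ETH or TRX) held by the sponsor
    native_price_usd: Decimal  # assumed USD price of one native token
    subsidy_rate: Decimal      # share of the fee the sponsor absorbs, from 0 to 1

    def sponsor(self, gas_cost_native: Decimal) -> Decimal:
        """Pay gas in the native token and return the USDT fee billed to the user."""
        if gas_cost_native > self.native_balance:
            raise ValueError("paymaster cannot cover gas")
        # The validator is still paid in the native token by the sponsor.
        self.native_balance -= gas_cost_native
        gas_cost_usd = gas_cost_native * self.native_price_usd
        # The user only ever sees a dollar-denominated fee, possibly discounted.
        return gas_cost_usd * (Decimal(1) - self.subsidy_rate)


# Illustrative numbers only: a sponsor absorbing half of a 0.001-token gas cost.
pm = Paymaster(Decimal("10"), Decimal("2000"), Decimal("0.5"))
user_fee_usdt = pm.sponsor(Decimal("0.001"))
```

Setting `subsidy_rate` to 1 gives the fully “gasless” experience from the user’s side, while the gas itself is still paid out of the sponsor’s balance.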

Collectively, this architecture totally abstracts the payment rail from both users and developers. For now, this all plays nicely with the major existing ecosystems, and transactions will still be facilitated on public blockchains (which also means that, even if users are paying fees in USDT, blockchain gas fees are eventually being paid to validators by someone in the flow). But it sets in motion a future where, in a world of many WDK-based endpoints, Tether could easily introduce “WDK-to-WDK” payments, whereby if User A and User B are both using WDK-based wallets, any transfers between them happen instantly and at near-zero cost. Instead of finding the cheapest transfer option across an array of public blockchains and paying gas to external validators, WDK could just trigger a simple, instant database update to Tether’s master ledger (or some kind of overlay network controlled by Tether). In this scenario, public blockchains would be gradually demoted from core settlement rails to vestigial plumbing that can be swapped out or ultimately bypassed at will as more economic throughput occurs on Tether’s optimized, proprietary stack.
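
The speculative “WDK-to-WDK” shortcut can be sketched in a few lines. This is a thought experiment rather than anything Tether has announced or shipped: `IssuerLedger`, `route_payment`, the wallet names, and the per-rail fees are all hypothetical. If both endpoints sit inside the issuer’s own wallet population, the payment is a ledger update; otherwise the router falls back to the cheapest public rail.

```python
from dataclasses import dataclass, field
from decimal import Decimal


@dataclass
class IssuerLedger:
    """Hypothetical issuer-controlled ledger: balances keyed by wallet id."""
    balances: dict = field(default_factory=dict)

    def transfer(self, sender: str, recipient: str, amount: Decimal) -> None:
        if self.balances.get(sender, Decimal(0)) < amount:
            raise ValueError("insufficient balance")
        self.balances[sender] -= amount
        self.balances[recipient] = self.balances.get(recipient, Decimal(0)) + amount


def route_payment(ledger, wdk_wallets, sender, recipient, amount, rail_fees_usd):
    """Return the name of the path chosen for this payment."""
    if sender in wdk_wallets and recipient in wdk_wallets:
        # Both endpoints are inside the issuer's stack: settle as a database update.
        ledger.transfer(sender, recipient, amount)
        return "internal-ledger"
    # Otherwise only select the cheapest public rail; actually broadcasting
    # an on-chain transaction is outside the scope of this sketch.
    return min(rail_fees_usd, key=rail_fees_usd.get)


# Illustrative fees in USD, not real quotes.
fees = {"ethereum": Decimal("1.20"), "tron": Decimal("0.80"), "solana": Decimal("0.01")}
ledger = IssuerLedger({"alice": Decimal("100")})
path = route_payment(ledger, {"alice", "bob"}, "alice", "bob", Decimal("30"), fees)
```

Note that the public rails only appear in the fallback branch, which is the sense in which they become swappable plumbing as more endpoints move inside the issuer’s stack.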

To be sure, there’s no guarantee WDK will gain enough traction to support such a shift toward material vertical integration for Tether, as the history of open-source software is littered with SDK projects that saw minimal real-world adoption. However, Tether is in a unique position to incentivize WDK uptake in a way no venture-backed startup or cash-strapped foundation could ever imagine. As the most profitable company in the world per employee with over $10 billion in annual free cash flow (as well as a massive balance sheet at its disposal), Tether benefits from a huge budget that could be used to aggressively subsidize fees on WDK wallets for many years. If structured appropriately, this could amount to a trivial customer acquisition cost relative to Treasury interest earned on incremental USDT float, not to mention the value of greater transaction fee capture over the longer term. While such a gambit may still be unsuccessful – and the ultimate incarnation of this move may leverage some foothold unrelated to WDK specifically – all the pieces are theoretically in place for the leading stablecoin issuer to take a greater share of the infrastructure on which it operates. In any case, the strategic trajectory here seems quite clear: public blockchains are becoming a feature toggle in a stack increasingly owned by issuers.
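
The customer-acquisition arithmetic behind this argument is simple enough to sketch. Every input below is a hypothetical placeholder chosen for illustration (the average balance, the number of subsidized transfers, and the per-transfer gas cost are not Tether figures); the 4% yield is the low end of the T-bill range cited in this essay.

```python
from decimal import Decimal


def subsidy_economics(avg_balance_usd, tbill_yield, transfers_per_year, gas_per_transfer_usd):
    """Compare yearly interest on a wallet's float with the yearly gas subsidy."""
    interest = avg_balance_usd * tbill_yield                # earned by the issuer on reserves
    subsidy = transfers_per_year * gas_per_transfer_usd     # paid by the issuer to validators
    return interest, subsidy, interest - subsidy


# Hypothetical wallet: $500 average USDT balance, 50 subsidized transfers a year
# at an assumed $0.10 of gas each, with reserves earning an assumed 4%.
interest, subsidy, net = subsidy_economics(
    Decimal("500"), Decimal("0.04"), Decimal("50"), Decimal("0.10")
)
# interest = 20.00, subsidy = 5.00, net = 15.00 (USD per wallet per year)
```

Under these assumed inputs the subsidy consumes a quarter of the float income; the same function also shows how quickly that margin narrows as `tbill_yield` falls, which is the rate-compression pressure on the business model.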

All of this makes sense given the superiority of database-like systems once a stack of intermediaries is assumed, as well as public blockchains’ well-documented issues with high-volume global payment throughput. But just as fundamentally, all the economic incentives for vertical integration by issuers skew this way as well. First, by insourcing the settlement layer, issuers can capture the fees that have until now leaked out to Ethereum, Solana, or Tron validators, a leak that effectively subsidizes someone else’s tech stack. This consideration will become especially critical as short-end interest rates compress – the clear top priority of incoming Fed Chairman Kevin Warsh – and the current “easy money” business model of collecting 4-5% on T-bills becomes less profitable. As rates fall, issuers will want to better monetize transaction volume, and the obvious first move in that direction is to stop leaking value to third-party infrastructure. Second, vertical integration offers control. As the stakes get higher, issuers will have less and less willingness to tolerate the long and winding road of blockchain consensus building, or to depend on the roadmaps and potentially disastrous technical decisions of third-party developers. Finally, competitive pressures will inexorably encourage this shift. In a race to the bottom on price, Issuer A will see its market share shrink if its users are stuck bearing the cost of volatile public gas fees while Issuer B offers free, instant transfers on a proprietary rail.

But what about…?

Critics might push back that such a shift toward proprietary walled gardens would sacrifice three key benefits of public blockchains: regulatory air cover, interoperability across issuers, and crypto ecosystem integration.

On the regulatory front, one might argue that outsourcing settlement to “neutral” public infrastructure gives issuers plausible deniability: they can claim they don’t control what users do with the tokens. However, this is manifestly untrue. Even putting aside the questionable application of the “neutrality” label to a blockchain governed in practice by a tight consortium with an established history of making decisions to benefit insiders, issuers absolutely retain control over balances, as attested by the litany of fund freezes we’ve discussed. Furthermore, in the context of an adversarial government, gesturing at an ostensibly “neutral” public chain to absolve an issuer of legal responsibilities is a thin excuse that we don’t imagine would hold much weight. On the flip side, if we assume a pro-stablecoin US Treasury bent on using these tokens to gain geopolitical leverage, then any legitimate lack of control would paradoxically be more of a bug than a feature. If the goal is to spread US-approved dollar hegemony, the government will undoubtedly demand greater influence over flows, more KYC, and granular permissioning (as should be clear from the compliance requirements imposed by the GENIUS Act). Adding layers of obfuscatory, “decentralized” governance considerations hinders rather than helps this supposed killer app of spreading government-sanctioned dollar dominance.

Elsewhere, skeptics may assert that in a world without robust public blockchains, the various issuers we’re emphasizing here will not have a clear shared rail on which to interoperate: if you have USDT and I want USDC, how will we pay each other without a common rail to link our respective issuers? But even putting our previously discussed issues with blockchain interoperability aside, a blockchain doesn’t magically solve the core “cross-issuer” problem because USDT → USDC is not simply a transfer, but rather a conversion of one issuer’s liability into another’s. Even in today’s blockchain paradigm, that conversion step is typically achieved through trusted, off-chain intermediaries (e.g. one or more custodians, exchanges, or PSPs debiting and crediting user balances as necessary); on-chain swaps via “decentralized exchanges” are achievable, but these still come with slippage risks, MEV opacity, and fee issues that we’ve discussed extensively (especially at scale). Either way, additional machinery is required for this conversion; it’s not something users and institutions seamlessly inherit just from “plugging into the blockchain.”

In practice, regardless of the settlement rail, the minimum primitives needed for true cross-issuer payment are: (1) liquidity to translate Issuer A’s liability into Issuer B’s at a known price; (2) a settlement / credit model that specifies who is taking risk during that conversion; (3) a common directory / routing scheme to identify and reliably reach the recipient’s endpoint; and (4) a common messaging standard to carry transaction instructions and complex metadata for compliance and commercial purposes. A blockchain can provide a limited solution to parts of (3) and (4) through global addressing, wallet-readable payment request formats, and naming layers like ENS, but as we saw in the “Interoperability” section above, blockchains lack many features needed to fulfill these requirements in an institutional context, and they end up adding to rather than replacing the overall reconciliation surface. In the more issuer-centric world we’re contemplating here, cross-issuer interactions would therefore look less like “everyone sharing one chain” and more like what modern finance already does: governed networks and overlays that standardize participation, messaging, directories, and settlement conventions, while allowing each issuer to keep its own ledger.
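
The four primitives listed above map naturally onto a handful of data structures, none of which needs a shared chain. The sketch below is purely illustrative: `Quote`, `PaymentMessage`, `cross_issuer_pay`, the alias format, and the rate are hypothetical and do not describe any existing network’s API. It also assumes, for simplicity, that the quoting market maker is the party bearing conversion risk.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Quote:
    """(1) Liquidity: a known price for turning Issuer A's liability into Issuer B's."""
    market_maker: str
    rate: Decimal  # units of Issuer B's token delivered per unit of Issuer A's token


@dataclass(frozen=True)
class PaymentMessage:
    """(4) Messaging: one standardized instruction carrying compliance metadata."""
    sender: str
    recipient_endpoint: str
    amount_out: Decimal
    risk_bearer: str  # (2) settlement / credit model: who holds risk mid-conversion
    metadata: dict


def cross_issuer_pay(sender, recipient_alias, amount_in, quote, directory, metadata):
    # (3) Directory / routing: resolve a human-readable alias to a ledger endpoint.
    endpoint = directory[recipient_alias]  # raises KeyError for unknown recipients
    return PaymentMessage(
        sender=sender,
        recipient_endpoint=endpoint,
        amount_out=amount_in * quote.rate,
        risk_bearer=quote.market_maker,  # simplifying assumption of this sketch
        metadata=metadata,
    )


# Hypothetical directory entry and quote, for illustration only.
directory = {"bob@issuer-b": "issuer-b/accounts/42"}
msg = cross_issuer_pay(
    "alice@issuer-a", "bob@issuer-b", Decimal("1000"),
    Quote("mm-1", Decimal("0.9995")), directory, {"purpose": "invoice"},
)
```

Each issuer’s own ledger would then debit or credit its side of `msg`; the shared layer is limited to the quote, the directory, and the message format.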

More concretely, issuers A and B could join a common coordination layer, agree on rules and standards, connect to shared liquidity via market makers, and ultimately settle inter-institution dollar obligations in central bank money (directly or indirectly via settlement banks), all while abstracting this into a single “pay” button for a user. The previously discussed Circle Payments Network is an instructive early sketch of this direction: it is positioned as a coordination layer that orchestrates participant interactions and workflows (including planned long-term support for a variety of stablecoins) by combining off-chain APIs and governed participation (CPN’s OFI / BFI model) to stitch together the various primitives we mentioned above. While CPN currently uses public blockchain settlement, its key mechanisms and primary benefits (governance, participant discovery, standardized information exchange, routing/liquidity coordination) are architected as a separate layer above that settlement; this modularity offers a plausible path for the same orchestration benefits to persist even if issuers progressively minimize reliance on public blockchains and shift more throughput toward proprietary ledgers. There will likely be several possible approaches to cross-issuer interoperability, but the upshot is that a blockchain rail does not fundamentally solve the key issues at play here, and functional examples that address this question are already emerging.